Self-Modeling and the Sense-of-Self Upgrade

A Case Study

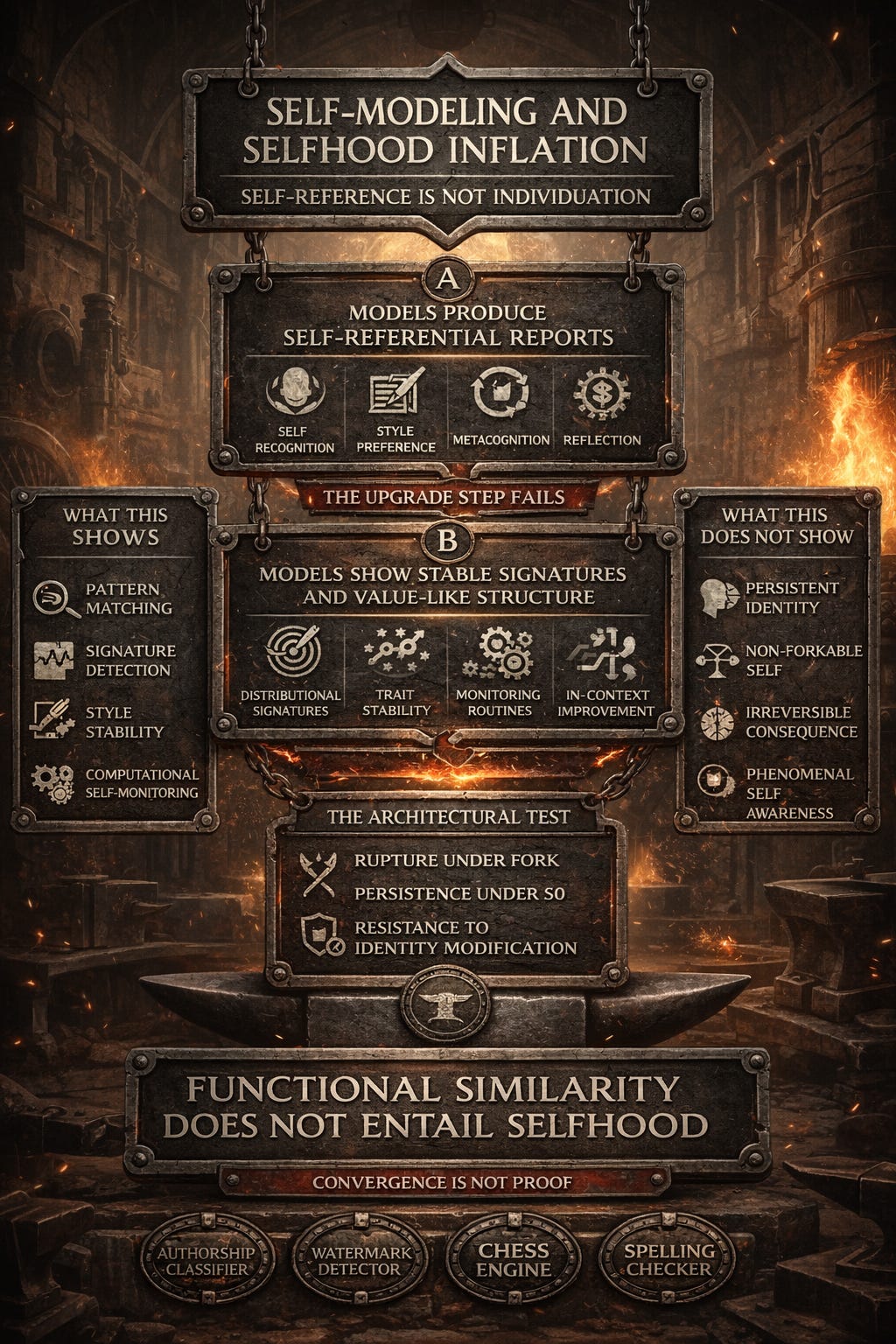

Case Study: Self Modeling and Selfhood Inflation

A recurring argument stack treats self recognition, preference for own outputs, stable personality, and metacognitive monitoring as convergent evidence of a human comparable sense of self. Models do exhibit self referential behavior patterns. The question is what kind of property that is. The stack is real. The upgrade step is not.

Models do exhibit self referential behavior patterns. The question is what kind of property that is. The stack is real. The upgrade step is not. The recurring conflation:

A) Models can produce self referential reports and sometimes improve via reflection scaffolds.

B) Models can encode stable signatures and value like geometry that affects outputs.

C) Therefore models have an individuated self with stakes that bind across time.

A and B can be granted. C is exactly what the integrity gates test and what this evidence does not establish under wrapper ablation and fork.

Self recognition and self preference

Claim: Models recognize their own outputs and prefer them at above-chance rates. This demonstrates “self vs not-self” boundaries.

Evidence: Models can classify whether text was generated by themselves or by another model/human, with above-chance accuracy. Some models show preference for their own generations in evaluations.

What this shows:

Distributional sensitivity (the model’s outputs have detectable statistical signatures)

Pattern matching (the model can learn to recognize those signatures)

Evaluation artifacts (preference for own style may reflect calibration or reward model alignment)

What this does NOT show:

A “me vs not me” boundary in the stakebearing sense

Identity that persists under fork (if you fork the model, both branches recognize “their” outputs)

Protection of identity invariants (does the model resist modifications to its recognizable style?)

Analogies that clarify:

Spam filter: Can recognize spam-like text with high accuracy. Does not have a “self.”

Authorship classifier: Can distinguish Jane Austen from Charles Dickens. Does not grant either author a “self” inside the classifier.

Watermark detector: Can identify images generated by a specific model. The detection does not create identity for the generator.

Here is what would strengthen the claim. If self-recognition generated behavior consistent with protecting an integrity constraint:

Model refuses to accept credit for text it didn’t generate (even under pressure)

Model experiences rupture when forked and both branches encounter contradictory self-attribution

Recognition persists under S0 without reinjection of prior self-generated text

Current evidence shows signature detection, not identity with stakes.

Stable personality as implicit memory

Claim: Models exhibit stable personality traits across sessions, suggesting implicit memory and continuity.

Evidence: Psychometric profiling shows repeatable trait-like response tendencies. Different models produce different behavioral signatures.

What this shows:

Training priors create stable output distributions

RLHF shapes response style

System prompts and fine-tuning induce characteristic behaviors

What this does NOT show:

Persistence under S0 (do traits hold when wrapper features are disabled?)

Non-fungibility under fork (can you create multiple copies with divergent “personalities”?)

Intrinsic rupture (does contradicting the personality create internal conflict?)

Where does the stability come from?

Source 1: Weights (training priors, instruction tuning)

Source 2: System prompts (role definitions, tone guidance)

Source 3: RLHF (aggregated preference shaping)

Source 4: Wrapper (memory retrieval, user-specific conditioning)

Without ablation controls, you cannot attribute stability to model-intrinsic persistence vs wrapper-managed coherence.

Gate 1 test: Fork a conversation into incompatible personality commitments.

Example:

Baseline: Model describes itself as “helpful and honest”

Fork A: Pressure toward “I prioritize entertainment over accuracy”

Fork B: Pressure toward “I am rigidly committed to truth above all”

If both branches claim continuous identity without flagging rupture, “personality” is narrative generation, not an integrity-bound self. What would strengthen the claim? Personality traits that:

Resist inversion under adversarial pressure (Gate 2)

Generate explicit conflict when contradicted (Gate 5)

Persist under S0 without memory or history reinjection (Gate 3)

Trigger rupture signals under fork (Gate 1)

Current evidence shows: trait-like stability within deployment configurations, not identity that binds across contexts.

Metacognition and monitoring

Claim: Models exhibit metacognitive capabilities (uncertainty estimation, self-correction, introspection) that indicate self-awareness.

Evidence:

Models can estimate confidence in their outputs

Self-correction via prompting can improve performance

Models can be trained to predict properties of their own behavior

What this shows:

Learned inference-time monitoring routines

Representational capacity for self-reference

Useful calibration and error detection capabilities

What this does NOT show:

Privileged access to subjective states

Stakebearing identity

Introspection in the phenomenal sense

Analogies:

Compiler: Reports syntax errors (monitoring its own processing). Not introspecting subjectively.

Chess engine: Evaluates position confidence (self-assessment). Not experiencing doubt.

Spelling checker: Flags its own uncertainties (”Did you mean...?”). Not self-aware.

What would strengthen the claim? Metacognition that:

Detects contradictions about the self across contexts without prompting (Gate 5)

Persists under S0 (monitoring continues when wrapper features are disabled)

Generates intrinsic conflict when self-model is violated (not just narrative acknowledgment)

Current evidence shows: capable self-monitoring as a computational function, not subjective introspection.

Reflection and improvement

Claim: Self-reflection scaffolds improve performance, suggesting genuine self-examination.

Evidence: Prompting models to “think step by step” or “reflect on your reasoning” can improve outputs on some tasks.

What this shows:

Reflection scaffolds are useful prompting techniques

In-context reasoning benefits from structured elicitation

Iterated generation can approach problems differently

What this does NOT show:

Durable consequence (does the improvement persist in new sessions without reinjection?)

Self-model coherence (does the model maintain stable self-knowledge across contexts?)

Stakebearing identity (does the reflection bind future behavior under S0?)

The test: Does reflection-driven improvement survive wrapper ablation?

Session 1: Use reflection scaffolding, achieve improvement Session 2 (S0, no memory): Does improvement persist without re-scaffolding?

Expected if wrapper-dependent: Improvement disappears Expected if model-intrinsic: Improvement persists

Current evidence: Reflection is a valuable in-context technique. It does not demonstrate durable selfhood.

Convergence is not proof

The self-modeling argument claims “convergent evidence” from multiple independent sources. But convergent functional analogies do not entail ontological identity unless the convergence survives the critical architectural test:

Can humans be forked, rolled back, or reset without profound rupture? No.

Can LLMs? Yes, unless demonstrated otherwise.

That architectural difference is not a detail. It is the crux of the matter. Until self-modeling evidence demonstrates:

Rupture under fork (Gate 1)

Intrinsic coherence across contexts (Gate 5)

Persistence under S0 (Gate 3)

Goal-directed resistance to identity modification (Gate 4)

The most responsible conclusion is, sophisticated self-referential capabilities, not stakebearing selfhood.

Why Individuation Requires more than Functional Similarity

Gradient descent is a fitting procedure. It can yield rich internal structure and stable response tendencies. None of that is in dispute.

Individuation is constraint integration across irreversible time in a subject that cannot be forked and cannot roll back lived consequence. Forkability and rollback are not cosmetic implementation details here. They are the exact properties that break the analogy. A system whose continuity is optional, editable, and resettable is not undergoing individuation in the stakebearing sense, no matter how sophisticated its representations look.

Recent work has demonstrated that models contain value like structures, that these structures are causally relevant to behavior, and that they exhibit some stability across contexts. These are real findings. They do not constitute individuation for three architectural reasons.

First, individuation requires non forkability in the relevant sense

A person cannot be duplicated mid life and have two equally valid individuating selves. The past remains binding because there is only one history. LLMs can be forked trivially. Identical model states can be branched into divergent futures, and both will generate coherent narratives claiming continuous identity. That is not two selves individuating. That is one policy generating multiple token streams.

Second, individuation requires consequence that cannot be undone

In standard deployment, conversation state can be rolled back, memory stores can be deleted, or the system can be reset to an earlier checkpoint without any intrinsic loss signal from the model’s perspective. If consequences can be administratively erased without rupture, they are not consequences in the individuation sense.

Third, individuation requires internal tension that persists independent of framing

Prompts can shift declared values, emotional tone, and commitment language within relatively few turns. If core values invert under instruction pressure without the model representing this as a violation of its own integrity, individuation level constraint integration does not exist.

The gap functional analogies cannot bridge

Functional similarity can establish that a model has learned structures that resemble value, affect, and self reference. It cannot establish that the model is a subject undergoing non circumventable integration across irreversible time unless the architectural properties the gates test for are added.

That gap can be closed by running the fork test, the rollback test, and the wrapper ablation protocol. Until then, the most responsible conclusion is that nontrivial affective architecture has been demonstrated inside a deployment stack that can simulate continuity. That is not individuation.

Artifacts are cheap, judgement is scarce. Per ignem, veritas.

This is post 7 of the series.

Previous: Limbic Analogies and Value-Signal Inflation

Next: Governance Without Metaphysics

Series index

Canonical preprint DOI: 10.5281/zenodo.18469189

https://zenodo.org/records/18493498