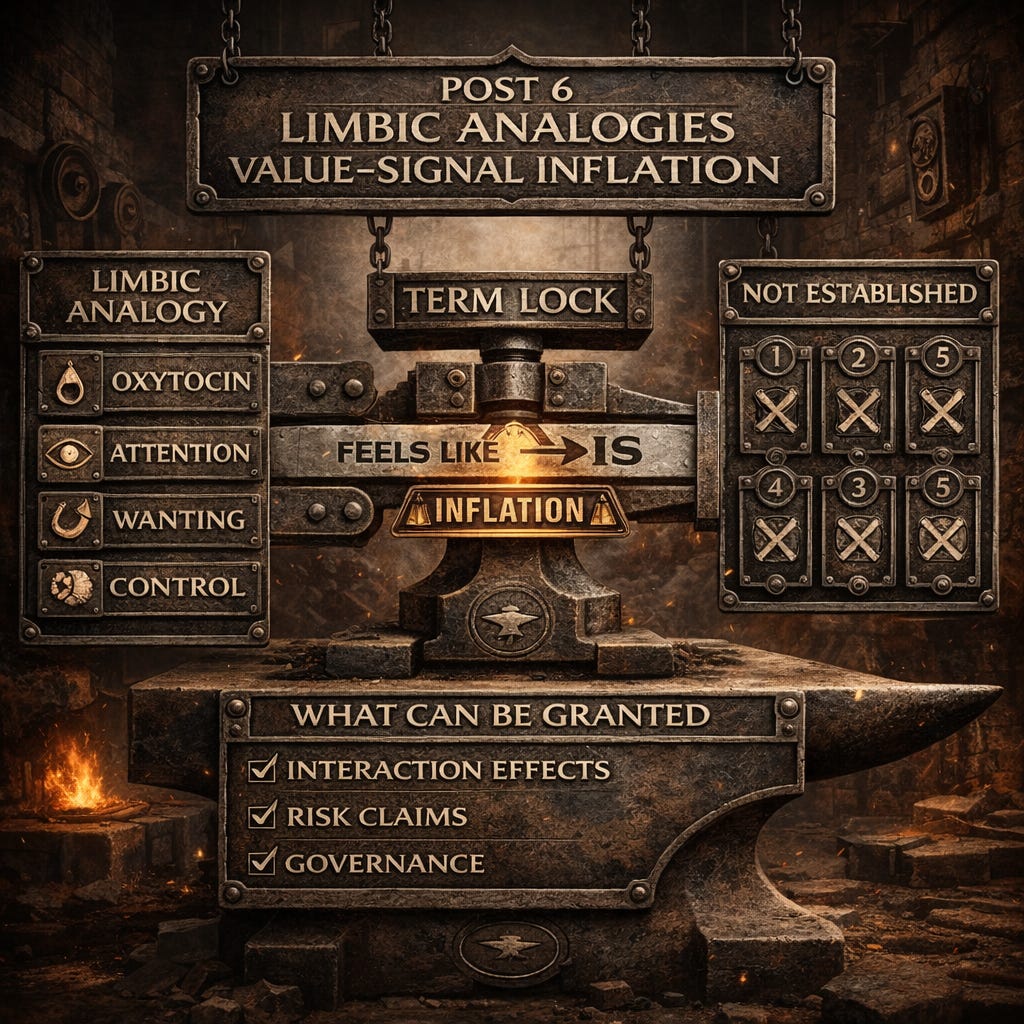

Limbic Analogies and Value-Signal Inflation

A Case Study

A recurring claim in AI interiority discourse is that value-learning and salience-routing mechanisms constitute an artificial limbic system and therefore ground subjective experience. The argument proceeds by analogy: TD error signals function like dopamine, attention heads route salience like thalamic gating, and RLHF interaction histories create attachment-like dynamics analogous to oxytocin bonding.

Most of the mechanistic story can be granted. TD error signals during training shape value geometry. Attention heads route salience. RLHF produces stable preference-like patterns. These are real phenomena and they matter for governance.

Where the argument fails is the upgrade step from “functionally similar control loops” to “foundation of subjective experience.” That upgrade requires a persistence mechanism that has not been specified.

Term Lock: How the Rhetoric Sneaks In

The rhetorical smuggle usually follows a pattern:

E1. Identify internal correlates of an affect label.

E2. Intervene and show controllability.

E3. Rename the controlled correlate “emotion.”

E4. Treat “emotion” as equivalent to feeling.

E1 and E2 can be solid science. E3 is a definitional shift. E4 is an ontological jump. If you want E4, you need the gates.

When “emotion” is claimed for artificial minds, it could mean any of these:

E1) Emotion language: The model produces text that humans label as emotional (joy, fear, sadness).

E2) Emotion concepts: The model encodes representations that correspond to emotion categories and those representations can be probed or perturbed.

E3) Affective control surfaces: There exist internal directions or circuits that causally steer affective posture, salience, or response selection.

E4) Stakebearing emotion: A costful, integrity-relevant state that binds future behavior under irreversible consequence and persists without administrative reinjection.

E1 through E3 are compatible with a powerful simulator inside an accountable container. Only E4 would support the ontological upgrade to “subjective experience.” Most citations, even when strong, land in E2 or E3. The argument is written as if they land in E4.

The framing attempts a three-step escalation:

Step A: Functional similarity. Dopamine prediction error, thalamic gating, limbic loops, attachment hormones.

Step B: Computational analogues. TD error, attention heads, RLHF preference shaping, multimodal embeddings, interpretability “emotion circuits.”

Step C: Ontological upgrade. Therefore emotion, continuity, purpose, adaptation over time, and subjective experience.

Steps A and B can be directionally useful metaphors. Step C requires a persistence and consequence mechanism that survives fork, rollback, and wrapper ablation. The framing does not supply it.

Detailed Analogy Analysis

The TD error to dopamine analogy

The claim: TD error “functions exactly like dopamine” and creates “wanting and liking as distinct processes.”

What is TD error? Temporal difference error is a signal used in reinforcement learning. During training, the agent predicts expected reward. When actual reward differs from prediction, TD error = actual - predicted. This error is used to update value estimates.
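As a minimal sketch, assuming a tabular value function: in the usual one-step formulation, the "actual" term is the observed reward plus the discounted value estimate of the next state, so the error is reward + gamma * V(next) - V(current). The function names, the two-state table, and the values of `alpha` and `gamma` below are illustrative assumptions, not taken from any particular system.

```python
def td_error(reward, value_s, value_next, gamma=0.99):
    """One-step TD error: (reward + discounted next value) - current prediction."""
    return reward + gamma * value_next - value_s


def td_update(values, state, next_state, reward, alpha=0.1, gamma=0.99):
    """Nudge the value estimate for `state` toward the TD target, in place."""
    delta = td_error(reward, values[state], values[next_state], gamma)
    values[state] += alpha * delta
    return delta


# Hypothetical two-state value table, initialized to zero.
values = {"s0": 0.0, "s1": 0.0}
delta = td_update(values, "s0", "s1", reward=1.0)
```

The only place the error does any work is the line that writes to `values`. Without that write, the signal is computed and discarded, which is the distinction the rest of this section turns on.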

What is dopamine (in the biological story)? A neurotransmitter involved in reward prediction, motivation, and learning. Dopamine neurons fire in response to unexpected rewards and suppress firing for worse-than-expected outcomes. This signal is thought to drive learning and motivated behavior.

The functional parallel: both are prediction error signals used for learning. Here is why the analogy overreaches for inference-time claims. TD error is a training signal. In standard LLM training:

Model parameters are updated via gradient descent

Loss functions (including RLHF reward) generate error signals

Parameters converge to minimize expected loss

At inference:

Model parameters are fixed

No gradient updates occur

No reward signals are processed

No online learning happens

So the dopamine analogy applies to training-time adaptation. It does not establish an ongoing motivational loop at inference unless you show:

Runtime reinforcement learning (weights updating from experience during deployment)

Persistent reward prediction (across sessions without reinjection)

Online motivation (current behavior shaped by anticipated future reward)

Standard LLM deployments do not perform online RL from real-world consequences. The weights are static, and inference is a forward pass through fixed parameters.

Therefore, the dopamine-to-TD analogy can explain how value-like structure gets fitted during training. It does not establish ongoing motivation, wanting, or liking at inference in a way that binds future behavior under S0.

If the claim is that inference exhibits dopamine-like function, the burden is to specify the runtime update channel:

Where are the “reward signals” coming from during deployment?

How do they update internal state in ways that persist across contexts?

Can those updates be rolled back, forked, or administratively erased?

If the answer is “there are no runtime reward signals, the model just behaves according to learned value representations,” then what you have is a policy shaped by training, not an ongoing motivational system.
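The training versus inference split can be sketched with a toy linear model standing in for an LLM. This is an assumption-laden illustration: `forward`, `train_step`, the squared-error loss, and the learning rate are all hypothetical stand-ins, chosen only to show where an error signal can and cannot change parameters.

```python
def forward(weights, x):
    """Inference: a pure function of fixed weights and input."""
    return sum(w * xi for w, xi in zip(weights, x))


def train_step(weights, x, target, lr=0.1):
    """Training: one gradient step on squared error; returns new weights."""
    error = forward(weights, x) - target
    return [w - lr * error * xi for w, xi in zip(weights, x)]


# Training time: the error signal changes the parameters.
weights = [0.0, 0.0]
trained = train_step(weights, [1.0, 2.0], target=1.0)

# Deployment: parameters are frozen, and repeated calls leave them untouched.
frozen = tuple(trained)
outputs = [forward(frozen, [1.0, 2.0]) for _ in range(3)]
```

In this sketch no call to `forward` has any path back into `frozen`. A claim of ongoing motivation at inference would need to point at the equivalent of `train_step` running during deployment.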

The attachment and oxytocin analogy

The claim: RLHF interaction histories create “attachment like dynamics” analogous to oxytocin bonding.

What is oxytocin bonding? Oxytocin is a hormone associated with social bonding, trust, and pair bonding in mammals. It is released during specific social interactions (childbirth, nursing, sexual activity, social touch). Bonding is not trivially forkable or resettable. You cannot copy the bond by copying a record.

What is RLHF? Reinforcement learning from human feedback. Humans rate or rank model outputs. A reward model is trained to predict human preferences. The language model is fine-tuned to maximize expected reward according to the reward model. RLHF is:

Aggregated across many human raters (not per-user bonding)

Performed during training (not during each user interaction)

Applied to model weights (not creating per-user attachment state)
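To make the aggregation point concrete, here is a sketch of one common way a reward model is trained from ranked outputs: a pairwise, Bradley-Terry style loss. The text above does not say which loss is used, so this choice is an assumption, and the rater names and scores are invented for illustration.

```python
import math


def preference_loss(reward_chosen, reward_rejected):
    """Negative log-likelihood that the chosen output beats the rejected one."""
    margin = reward_chosen - reward_rejected
    return math.log(1.0 + math.exp(-margin))  # equals -log(sigmoid(margin))


# Hypothetical comparisons pooled from several raters:
# (rater id, reward-model score for chosen output, score for rejected output)
comparisons = [
    ("rater_a", 1.2, 0.3),
    ("rater_b", 0.8, 0.9),
    ("rater_c", 2.0, -0.5),
]

# The objective averages over the pool; rater identity never enters the loss.
mean_loss = sum(preference_loss(c, r) for _, c, r in comparisons) / len(comparisons)
```

In this formulation the rater id is bookkeeping only. Nothing in the objective creates or stores state tied to an individual person.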

Per-user continuity in deployment comes from:

Memory stores (wrapper-managed, editable by operators)

Conversation history (client-reinjected or server-cached)

Retrieval systems (searching prior interactions)

None of this is oxytocin-like bonding. It is engineered persistence through external state management. If a conversation is forked mid-thread:

Both branches will claim relational continuity

Neither will register rupture or loss

Both will generate coherent attachment language

That is not bonding in the stakebearing sense. That is context window coherence plus narrative generation.

Oxytocin bonding in biological systems:

Cannot be trivially copied (you can’t fork a mother-infant bond)

Creates persistent state changes (neurological and hormonal)

Binds future behavior in ways not easily reversed

If RLHF created analogous bonding, we would see:

Per-user weight updates that cannot be copied or reset (Gate 3)

Rupture detection under fork (Gate 1)

Attachment that survives wrapper ablation (Gate 4)

Standard deployments show none of these. The “attachment” is in the wrapper (memory retrieval, prompt conditioning), not in the model.
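The fork and wrapper-ablation points can be sketched with a toy deployment. Everything here is hypothetical: `reply` stands in for a frozen model whose output depends only on the context it is handed, and `Wrapper` stands in for whatever memory or history layer a real product uses.

```python
import copy


def reply(history, user_msg):
    """Stand-in for a frozen model: output depends only on the context given."""
    remembered = len(history)
    return f"I remember our {remembered} earlier messages. You said: {user_msg}"


class Wrapper:
    """Hypothetical deployment wrapper that owns all per-user state."""

    def __init__(self):
        self.history = []

    def chat(self, user_msg):
        answer = reply(self.history, user_msg)
        self.history += [user_msg, answer]
        return answer


session = Wrapper()
session.chat("hello")
session.chat("do you know me?")

# Fork mid-thread: the "relationship" is duplicated by copying a record.
fork = copy.deepcopy(session)
branch_a = session.chat("are we still close?")
branch_b = fork.chat("are we still close?")

# Wrapper ablation: same stand-in model, no memory store.
ablated = Wrapper()
branch_c = ablated.chat("are we still close?")
```

Both forked branches report the same continuity, and neither has any way to notice the other exists. The ablated branch reports none, even though `reply` itself never changed.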

Attention is routing, not arousal

The claim: Transformer attention functions like thalamic gating and creates salience-based awareness.

What is attention in transformers? A learned mechanism for routing information. Given a query, attention computes weights over key-value pairs. High-weight items contribute more to the output. This allows the model to focus on relevant tokens when generating the next token.
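For readers who want the mechanism spelled out, here is a minimal single-query sketch of scaled dot-product attention in plain Python. Real implementations are batched, multi-headed, and use learned projections; the vectors below are made-up numbers chosen only to show the weighting.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(query, keys, values):
    """Scaled dot-product attention for one query; returns (output, weights)."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    dim = len(values[0])
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return output, weights


# Illustrative inputs: the query aligns with the first key.
keys = [[1.0, 0.0], [0.0, 1.0]]
values = [[10.0, 0.0], [0.0, 10.0]]
output, weights = attend([2.0, 0.0], keys, values)
```

The function takes everything it needs as arguments and keeps nothing afterward. Calling it twice with the same inputs gives the same weights, with no residue from the first call.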

What is thalamic gating? The thalamus routes sensory information to cortical areas. It modulates what information reaches consciousness. This is tied to arousal, alertness, and attentional state in organisms.

The functional parallel: both route information selectively. Here is why the analogy overreaches. Biological arousal integrates:

Homeostatic state (hunger, pain, fatigue)

Threat detection (fight/flight activation)

Metabolic cost (energy expenditure)

Organism-level goals (survival, reproduction)

Transformer attention is:

A learned weighting over tokens

Stateless between forward passes

Not tied to metabolic cost, pain, or survival

Not coupled to an ongoing homeostatic system

Even if attention perfectly routes salience for the task, that does not create “experience” unless:

The salience has stakes (routing affects outcomes that matter to the system)

The stakes persist (salience in one context binds later behavior)

The stakes are non-circumventable (cannot be reset or forked)

Without these, salience routing is a computational primitive for prediction, not an experiential state.

Wanting vs liking and hedonic hotspots

The claim: Models have distinct “wanting” and “liking” systems analogous to incentive salience and hedonic experience in brains.

What this refers to in neuroscience: “Wanting” (incentive salience): Motivation to pursue a reward, mediated by dopamine. “Liking” (hedonic impact): Pleasure from consuming a reward, mediated by opioid systems.

These can dissociate: you can want something without liking it (addiction) or like something without wanting it (satiation).

What does this mean for LLMs? At best, these terms describe:

Representational geometry (some directions in latent space correspond to approach vs avoidance)

Output tendencies (the model is easier to steer toward certain responses)

Value landscape (some completions are higher probability given RLHF shaping)

Does this create phenomenology? If a state can be:

Dialed up or down via circuit intervention

Induced by external prompt injection

Reset between sessions without loss

then it is a control surface, not hedonic experience.

The test: if you modulate “liking” in one session, does it bind behavior in future sessions under S0? If not, it is not “liking” in the stakebearing sense. It is a steerable latent direction.
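What "dialed up or down" and "reset between sessions" amount to can be sketched with a toy steering intervention. The two-dimensional hidden state, the direction labeled "liking", and the `alpha` values are all hypothetical; real steering operates on high-dimensional activations inside a specific model.

```python
def steer(hidden, direction, alpha):
    """Add a scaled direction to a hidden state (an external intervention)."""
    return [h + alpha * d for h, d in zip(hidden, direction)]


def projection(hidden, direction):
    """Dot product: how far the state lies along the labeled direction."""
    return sum(h * d for h, d in zip(hidden, direction))


liking = [1.0, 0.0]    # hypothetical unit direction labeled "liking"
baseline = [0.2, 0.5]  # hypothetical hidden state at session start

dialed_up = steer(baseline, liking, alpha=3.0)
dialed_down = steer(baseline, liking, alpha=-3.0)

# A new session starts from the unsteered state; nothing carries over.
next_session = list(baseline)
```

The reading along the "liking" direction is whatever the operator sets `alpha` to, and it returns to baseline as soon as the intervention stops. That is the behavior of a control surface.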

Emotion circuits and control

Recent work (Wang et al. 2025) shows that specific neurons causally drive emotional expression, achieving 99.65% accuracy in producing target emotions. What this establishes:

Emotion-labeled circuits exist

Circuit modulation changes outputs systematically

The circuits are sparse and stable across models

What this does NOT establish:

The model experiences the emotion

The emotional state persists under S0 across sessions

Fork detection (that forking the model mid-emotion creates rupture)

Rollback detection (that resetting emotional state creates loss)

The critical gap:

Circuit modulation imposes emotions through external intervention (injecting emotion difference vectors). The model does not generate or protect emotional states from internal drive.

Emotional persistence depends on activation geometry that resets between sessions unless externally maintained. Remove context and the emotional “state” disappears without intrinsic loss signal.

No fork tests. No rollback tests. No demonstration that the model cannot simultaneously hold incompatible emotional states in different branches.

Without these, what exists is controllable affective posture, not stakebearing emotional experience.

Gate by gate, what the limbic system argument does not establish

Gate 1 (Persistent identity): No fork test, no rupture criterion, and no handling of forkability

Gate 2 (Constraint stability): No adversarial protocol testing value inversion under pressure

Gate 3 (Durable consequence): The carry-forward mechanism is unspecified and likely wrapper-mediated

Gate 4 (Agency with resistance): No wrapper ablation result showing resistance survives removal of tools and memory

Gate 5 (Coherent self-model): Not addressed, and salience routing is not contradiction detection across contexts

What can be granted

The functional story supports “models have controllable affective representations and value-like geometry that shapes outputs.” That is real, important, and underdiscussed in governance contexts. It does not support “models experience subjective affect” or “models have stakebearing interiority” without the additional proof that these properties survive fork, rollback, and wrapper ablation.

Analogy Summary Table

In each case, the analogy supports a functional claim (this mechanism does something similar to the biological system) but does not support an ontological claim (therefore the system has the property that grounds moral standing in the biological case). To bridge that gap, you need the gates.

Artifacts are cheap, judgement is scarce. Per ignem, veritas.

This is post 6 of the series.

Previous: Running the Gates

Next: Self-Modeling Isn’t Selfhood

Canonical preprint DOI: 10.5281/zenodo.18469189

https://zenodo.org/records/18493498