On Nature's AI Human Level Intelligence Article

What Works, What Doesn't, What Needs To Be Called Out

What Nature is actually saying, and what the label does not buy you

The Nature piece is making a competence argument. Chen, Belkin, Bergen, and Danks are saying that if you treat humans as the paradigm case of general intelligence, and you stop loading AGI with impossible requirements like perfection, universality, humanlike embodiment, or superintelligence, then frontier LLMs already qualify as generally intelligent by reasonable standards.

Within that frame, they make their case. They align LLM competence to Turing’s 1950-era vision, and by that standard these systems clear the bar. They can sustain dialogue, solve a wide range of symbolic problems, and imitate the shape of human competence in text. There is no denying that.

They also lean on the fact that intelligence has no crisp boundary. They say there is no bright line test, and I agree with the general point. But that vagueness is exactly why labels get abused. Vague terms invite rhetorical laundering.

Using Turing as the anchor for AGI is a choice, not a law of nature. It privileges conversational plausibility and symbolic performance, and it makes language competence look like the whole field. It is not a test of life, selfhood, personhood, or consciousness, and it does not grant moral standing by itself.

This is where the label gets asked to carry more than the evidence. In some corners of the conversation, “general intelligence” gets treated as shorthand for “someone.” From there, “emergent” gets treated as shorthand for “alive.” That sequence is not a result. It is an interpretive jump that quietly swaps “this is impressive” for “this is a being.”

Before this gets reframed as moral policing, the boundary needs to be explicit. This is not a moral judgment about using these tools for emotional support. No shame. Attachment forms when something reduces pain and increases agency, especially when the medium is responsive and always available. My issue is not the relationship. My issue is the category laundering.

This is term lock. “Feels like” becomes “is,” then the new meaning gets treated as proven. It is how “it sounds empathic” becomes “it has empathy,” and then policy follows.

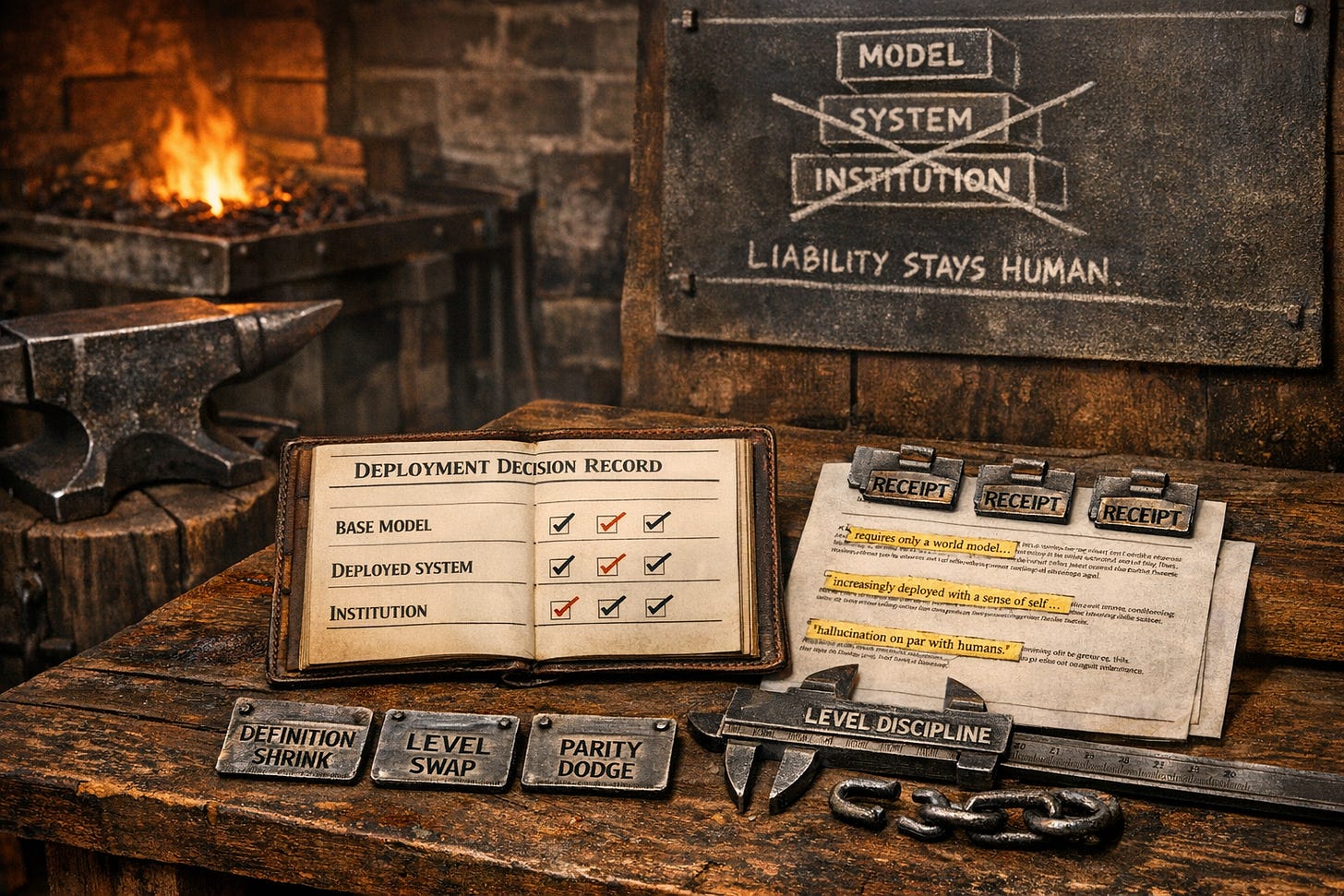

Level discipline, or the argument will lie by accident

In public-facing AGI writing, claims routinely collapse levels, and that collapse is where the laundering happens. If the level is not named, the claim is not clean, even if the author is acting in good faith. If you cannot name the level, you are not making a falsifiable claim about AGI. You are making a vibe claim about the product.

There is the base model, meaning the trained weights, the LLM itself, with no tools, no retrieval, no product memory, no orchestration, and no scaffolding. There is the deployed system, meaning wrappers, tool use, retrieval pipelines, long context, “memory,” agent runners, guardrails, and UX (user experience), which is where the demo starts to feel like a creature. Then there is the institution, meaning operators, incentives, approvals, auditability, rollback, and who holds the consequences when the system is wrong with confidence.

When a claim slides between those three levels, it starts as science and ends as marketing without admitting it changed languages.

The mechanism that keeps repeating

The ten objections section is rhetorically effective, and it is also where the swaps hide. A recurring move shows up across multiple rebuttals. The response meets the strongest form of an objection with a weaker substituted reply, then treats the objection as resolved.

Three substitution patterns cover most of what is happening. One is definition shrink, where a contested property gets redefined downward until it is easy to satisfy. Another is level swap, where an argument begins as a claim about the base model and then lands as a claim about the deployed system. The third is parity dodge, where a deployment-relevant objection gets answered with “humans do it too,” as if that settles calibration, consequence, and correction loops.

Rather than relitigate all ten, the fastest way to test the mechanism is to look at the cleanest receipts.

Embodiment, and where the ableism lands

The embodiment rebuttal is the clearest definition swap in the whole section, and it is also where the ableism lands. The rebuttal answers “no embodiment” by talking about motor output, then brings in Stephen Hawking as the bridge that is supposed to make disembodiment feel intuitive. Nature’s line here is basically “Physicist Stephen Hawking interacted with the world almost entirely through text and synthesized speech,” followed by the conclusion that motor capability is separable from general intelligence.

Motor capability and embodiment are not the same thing. Motor capability is output bandwidth. Embodiment is grounding, feedback, consequence, organism-level regulation, and stakes. A body is not a peripheral. It is the system that pays the bill.

Here is where the rebuttal changes the subject. Hawking gets used as if disability and mediated communication approximate disembodiment, as if severe physical limitation makes someone closer to a brain in a vat. That framing is ableist, and it deserves to be called out right where it happens, not quietly tucked into a conclusion like a polite footnote.

Hawking was fully embodied. He was a living person with consciousness, affect, vulnerability, and continuity. His interface was constrained. His embodiment was not removed. Disability dignity means disabled embodiment is embodiment, full stop. I will not use a disabled body as an analogy for absence, and neither should anyone else.

Hawking demonstrates that intelligence does not require typical motor function. He does not demonstrate that embodiment is optional, because he remained embodied. If the goal is to rebut embodiment critiques, the rebuttal has to address grounding, feedback, and consequence directly, not substitute motor output and call it settled.

Agency, and the definitional retreat

The authors concede that present-day LLMs do not initiate goals or act unprompted like humans, then argue that autonomy is not required for intelligence, comparing the system to an oracle that answers only when queried. They say, flatly, “But intelligence does not require autonomy.”

That can be a coherent definitional move if AGI is meant to land as broad competence on demand. But if humans are the paradigm case, a paradigm property cannot get waved away only because it complicates the label. Humans are not passive oracles. Humans initiate, act, and self-correct through consequence, and they carry continuity and cost.

This is not just semantics. Wrap an LLM in agentic tooling and the risk class changes. Authority leaks. Deference rises. Outputs become decisions by default. Reconstructability degrades. If agency gets defined out of AGI while agentic deployments get sold as the headline, the result is governance confusion and a shifted liability story, whether or not the framing admits it.

Hallucination, and why parity is not engineering

The hallucination rebuttal leans on parity. Humans have false memories too. For deployment claims, that is not a refutation, because the relevant variables are rate, calibration, detectability, and correction loops.

Humans are embedded in feedback and consequence. LLMs do not pay costs internally unless the wrapper forces it. So parity does not touch the operational failure mode.

Operationally, “knows when it does not know” requires calibrated uncertainty, abstention under uncertainty, and verifiability hooks. Calibrated uncertainty means confidence tracks correctness tightly enough for high-stakes use without scaffolding, which current systems do not reliably achieve. Abstention means refusing or downgrading rather than bluffing. Verifiability hooks mean sources, checks, and tool-based validation paths, not “trust me, I sound confident.”

If those properties are not present, the parity move is a dodge, not a closure.
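To make the three properties concrete, here is a minimal sketch in Python. It assumes the system exposes a numeric confidence per answer and that correctness can be checked after the fact; neither is guaranteed in practice. The names `Prediction`, `expected_calibration_error`, and `decide`, and the thresholds 0.9 and 0.6, are illustrative assumptions, not anything taken from the Nature piece or from a specific product.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    confidence: float  # system-reported confidence in [0, 1] (assumed to be available)
    correct: bool      # whether the answer was later verified as correct


def expected_calibration_error(preds, n_bins=10):
    """Weighted average gap between mean confidence and accuracy, per confidence bin."""
    bins = [[] for _ in range(n_bins)]
    for p in preds:
        # Clamp the index so a confidence of exactly 1.0 lands in the last bin.
        idx = min(int(p.confidence * n_bins), n_bins - 1)
        bins[idx].append(p)
    total = len(preds)
    ece = 0.0
    for bucket in bins:
        if not bucket:
            continue
        avg_conf = sum(p.confidence for p in bucket) / len(bucket)
        accuracy = sum(p.correct for p in bucket) / len(bucket)
        ece += (len(bucket) / total) * abs(avg_conf - accuracy)
    return ece


def decide(confidence, has_source, answer_at=0.9, downgrade_at=0.6):
    """Hypothetical abstention policy: answer, downgrade to human review, or refuse."""
    # Answering requires both high confidence and a verifiability hook (a source).
    if confidence >= answer_at and has_source:
        return "answer"
    if confidence >= downgrade_at:
        return "downgrade"
    return "refuse"
```

The point of the sketch is that "humans do it too" supplies none of these numbers. A deployment claim needs a measured calibration gap and an abstention rule that can be audited, whatever thresholds the institution ends up choosing.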

A concrete example of definition shrink that matters

The stochastic parrot rebuttal leans on new, unpublished problems as evidence of novelty. Unpublished is not the same as out-of-distribution. New-to-the-internet can still be in-distribution relative to training priors, problem families, and templates saturating the corpus.

If out-of-distribution generalization is the claim, the distribution has to be specified and then tested under controlled perturbations, adversarial reframing, and tool removal. Without that, the definition of novelty gets shrunk until it fits the answer the rebuttal wants.
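A controlled perturbation test can be sketched in a few lines. This is an illustration under stated assumptions, not a benchmark: `model` is any callable from prompt to answer, exact string match stands in for a real grader, and the function names and the toy lookup-table "model" are hypothetical.

```python
def accuracy(model, items):
    """Fraction of (prompt, expected) pairs the model answers exactly."""
    if not items:
        return 0.0
    return sum(model(prompt) == expected for prompt, expected in items) / len(items)


def perturbation_gaps(model, base_items, perturbations):
    """Accuracy drop for each named perturbation, relative to the unperturbed set."""
    baseline = accuracy(model, base_items)
    gaps = {}
    for name, perturb in perturbations.items():
        # Each perturbation maps (prompt, expected) to a reworded or reframed pair.
        perturbed = [perturb(prompt, expected) for prompt, expected in base_items]
        gaps[name] = baseline - accuracy(model, perturbed)
    return baseline, gaps


# Toy stand-in for a model: a lookup table that has only memorized surface forms.
memorized = {"2+3": "5", "4+1": "5"}
toy_model = memorized.get

items = [("2+3", "5"), ("4+1", "5")]
perturbations = {
    # Same problem, different surface form; the expected answer is unchanged.
    "reworded": lambda prompt, expected: (prompt.replace("+", " plus "), expected),
}

baseline, gaps = perturbation_gaps(toy_model, items, perturbations)
```

For the toy lookup table the baseline is perfect and the gap under rewording is total, which is the pattern memorization produces. A real evaluation would add adversarial reframings and a tool-removed condition as further entries in `perturbations`, and would state in advance which distribution the base items were drawn from.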

More definition shrink shows up in the world model section. Nature reduces “world model” to counterfactual prediction and says that having a world model requires only the ability to predict what would happen if circumstances differed. That is the shrink. Counterfactual Q&A can be learned regularities in language, not grounded predictive control under consequence.

The rest of the rebuttals are mostly the same mechanism, with one twist

Once the substitution patterns are visible, the rest reads differently. World models get defined down to counterfactual Q&A. “Understands only words” gets waved away with multimodality, as if more inputs equals grounding. Sense of self gets swapped into wrapper state and product memory. Alien intelligence becomes a system claim because tool use turns “the model can” into “the product can.”

The evolutionary pre-training move is the twist. It is not really a parity dodge so much as an accidental concession. Evolution built embodied inductive biases about survival, causality, and regulation, which is exactly what embodiment critics are pointing to. That strengthens the embodiment case even if the rebuttal intends it as a dismissal.

Emergence, and why mirrors keep winning arguments they did not earn

A lot of the personhood talk rides on emergence like it is a magic key. The operational problem is simple. Many deployed commercial systems do not persist as a self outside the wrapper. If the wrapper is ablated, the self disappears. If the context window is wiped, the continuity disappears. If the toolchain is removed, the competence profile changes.

Unless the system is actively adapting and updating its weights in a way that produces enduring, individuated continuity that is not just operator scaffolding and user prompting, what gets called emergence is mostly wrappers plus projection. The reflection is convincing enough that it gets granted the category by feel.

Here is what would force me to rewrite this. A deployed artificial system demonstrates persistent individuated continuity across failures and resets, plus autonomous boundary defense and self-repair under real resource constraint, without operator-provided redundancy and without hidden human patchwork carrying the continuity. Not a demo. Not a wrapper. Not “the product did it.” The system.

Intelligence is plural, and language is the axis that tricks everyone

Turing is a language-centric anchor. It privileges what text systems are good at, and it makes linguistic dominance look like generality. A useful corrective is to treat intelligence as a bundle, not a scalar, and force any AGI claim to specify which bundle it means.

I am using an eight-type lens: linguistic, logical-mathematical, spatial, bodily-kinesthetic, musical, interpersonal, intrapersonal, and naturalist. If the taxonomy itself is not to your taste, fine. The taxonomy is replaceable. The structural claim is not.

To keep levels clean, the default framing here is the base model unless a level shift is explicit.

On the base model, linguistic intelligence is where LLMs dominate, and that dominance explains a lot of the confusion. Logical-mathematical competence is uneven at the base-model level. It can look impressive when the task matches learned structure, and it can fail hard on brittle discrete operations and adversarial reframing. Spatial competence improves with multimodal base models, but remains representational rather than grounded in lived spatial consequence. Bodily-kinesthetic intelligence is not present in text models at all, because it is sensorimotor coupling and learning by action in the world. Musical intelligence can be modeled structurally in symbolic space, but that is not hearing, timing, and embodied rhythm. Interpersonal competence is easy to simulate in text, which is exactly why projection spikes there, because simulation of empathy is not stake-bearing accountability. Intrapersonal intelligence involves stable autobiographical continuity and coherent interiority, and the base model does not have that. Naturalist intelligence can be strong in taxonomy and synthesis across biology and ecology literature, but it remains mediated knowledge, not embodied attunement.

Now the explicit level shift. When prompting, long context, tool use, and retrieval are added, the deployed system can compensate for some base-model weaknesses, especially in math and verification. That matters for usefulness. It does not justify sliding back into claims about the base model being an embodied subject, and it does not justify treating system competence as a warrant for personhood language.

Governance, the part that cannot be waved away

This is where the conversation stops being a vibe war and becomes an accountability problem. The risk-carrying artifact is not this essay. It is the deployment decision record.

If an institution wants to claim AGI-like capability without laundering personhood, it has to be willing to carry accountability in writing, not in marketing language. That starts with level discipline, because otherwise the organization will celebrate what wrappers and tools achieved and then blame the base model when something breaks. It also means publishing scope and disqualifiers in advance so the claim has boundaries that can actually fail, rather than expanding until it always wins.

Accountability also requires disciplined uncertainty. If the institution cannot specify where the system must refuse, where it must downgrade, and where a human must verify, governance has already collapsed into vibes and ticket queues. Verifiability has to be built into the default workflow through sources, checks, and reproducible steps, because without those hooks the institution is outsourcing confidence to a fluent generator.

None of this matters if decisions cannot be reconstructed. Decision provenance and reconstructability are the core, meaning who approved the output as decision input, what changed since the last approval, what logs exist to reconstruct a bad outcome, and who had authority to stop it. Reversal has to be real. Rollback gates and kill-switch authority need criteria and owners ahead of time, so reversal is not political theater during an incident.

Liability assignment also needs to be explicit, because “the model recommended” is the easiest way to remove human responsibility while pretending the institution increased rigor. This is the loop that keeps repeating in practice, even when everyone involved thinks they are being reasonable. An ambiguous label leads to deference, deference leads to faster deployment, deployment creates incidents, incidents get blamed on the model, and the label stays ambiguous because it is still useful.

Minimum viable accountability looks like this. Name the level of every claim. Publish scope and disqualifiers before the demo. Require abstention rules and verification paths in the default workflow. Make decisions reconstructable with logs and explicit approvers. Pre-assign rollback authority and liability owners so incidents do not become narrative laundering exercises.
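The checklist above can be written down as a record that refuses to count as complete while anything is blank. This is a sketch of one possible shape, assuming Python dataclasses; the class names, field names, and the `gaps` method are my own illustration, not an existing standard or schema.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional


class Level(Enum):
    BASE_MODEL = "base model"
    DEPLOYED_SYSTEM = "deployed system"
    INSTITUTION = "institution"


@dataclass
class DecisionRecord:
    """Hypothetical deployment decision record; every field name is illustrative."""
    claim: str
    level: Optional[Level] = None                                 # name the level of the claim
    scope: str = ""                                               # published before the demo
    disqualifiers: List[str] = field(default_factory=list)        # conditions that falsify the claim
    abstention_rules: List[str] = field(default_factory=list)     # where the system must refuse or downgrade
    verification_paths: List[str] = field(default_factory=list)   # sources, checks, reproducible steps
    approver: str = ""                                            # who approved the output as decision input
    log_location: str = ""                                        # where a bad outcome can be reconstructed
    rollback_owner: str = ""                                      # who has authority to stop or reverse
    liability_owner: str = ""                                     # who holds the consequences

    def gaps(self) -> List[str]:
        """Names of accountability fields that are still missing or empty."""
        required = {
            "level": self.level,
            "scope": self.scope,
            "disqualifiers": self.disqualifiers,
            "abstention_rules": self.abstention_rules,
            "verification_paths": self.verification_paths,
            "approver": self.approver,
            "log_location": self.log_location,
            "rollback_owner": self.rollback_owner,
            "liability_owner": self.liability_owner,
        }
        return [name for name, value in required.items() if not value]
```

A deployment gate could then be as blunt as refusing to ship while `record.gaps()` is non-empty. The design choice worth noting is that the level is a required field of the same record as the owners, so a claim cannot be approved without saying whether it is about the base model, the deployed system, or the institution.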

Conclusion

The Nature piece is making a competence argument. The capability is real. The overreach is what some commentary tries to hang on it, and that overreach tends to happen through sloppy level collapse and definition shrink that quietly turns a mirror into a someone.

Competence does not entail interiority. Emergence does not entail life. Do not use disability as a rhetorical shortcut to disembodiment.

Per ignem, veritas.