Leading Through AI Adoption: How to Use AI Without Losing the People Who Can Build Without It

A framework for engineering leaders navigating AI tools without gutting technical expertise

AI is supposed to make everyone 10x productive. Instead, senior engineers report feeling 0.5x, buried in review work. This isn’t a feelings problem. It’s a leadership problem with structural causes and strategic fixes.

The Problem Pattern

Inside the hype deck, AI accelerates development. Inside the IDE, it creates a different pattern:

PRs full of code that compiles but misses the point

One or two seniors doing all substantive review and architecture

Leadership watching dashboards that say “productivity up”

The senior engineer’s experience shifts. You used to design systems, write code, mentor juniors, and conduct reviews that sharpened the team. Now your day goes to cleaning up after people who prompted AI without understanding what they shipped, writing long review comments that explain what the code actually does, and watching craft work shrink while glue work grows.

When people say “AI is draining my passion,” that’s a signal. The rollout is hollowing out the work.

Root Cause: AI as Magic KPI

Most of this pain traces to one bad mental model: AI as a magic KPI instead of a power tool.

You can spot this pattern easily:

Execs brag about “X percent of code written by AI”

License count becomes the success metric

Every team gets pushed to “use it more” with no guardrails

“We don’t need juniors anymore, the model is our junior”

This creates two failure modes.

AI as replacement for thinking. Code becomes whatever the model suggested first. Hope it compiles. Hope the exhausted senior catches the problems. You get more output, less engineering. Seniors become unpaid code review mops.

AI as replacement for juniors. Companies stop hiring the people who would grow into the next generation of senior engineers. You schedule a talent cliff five years out, when current seniors retire and nobody left has learned to build from scratch.

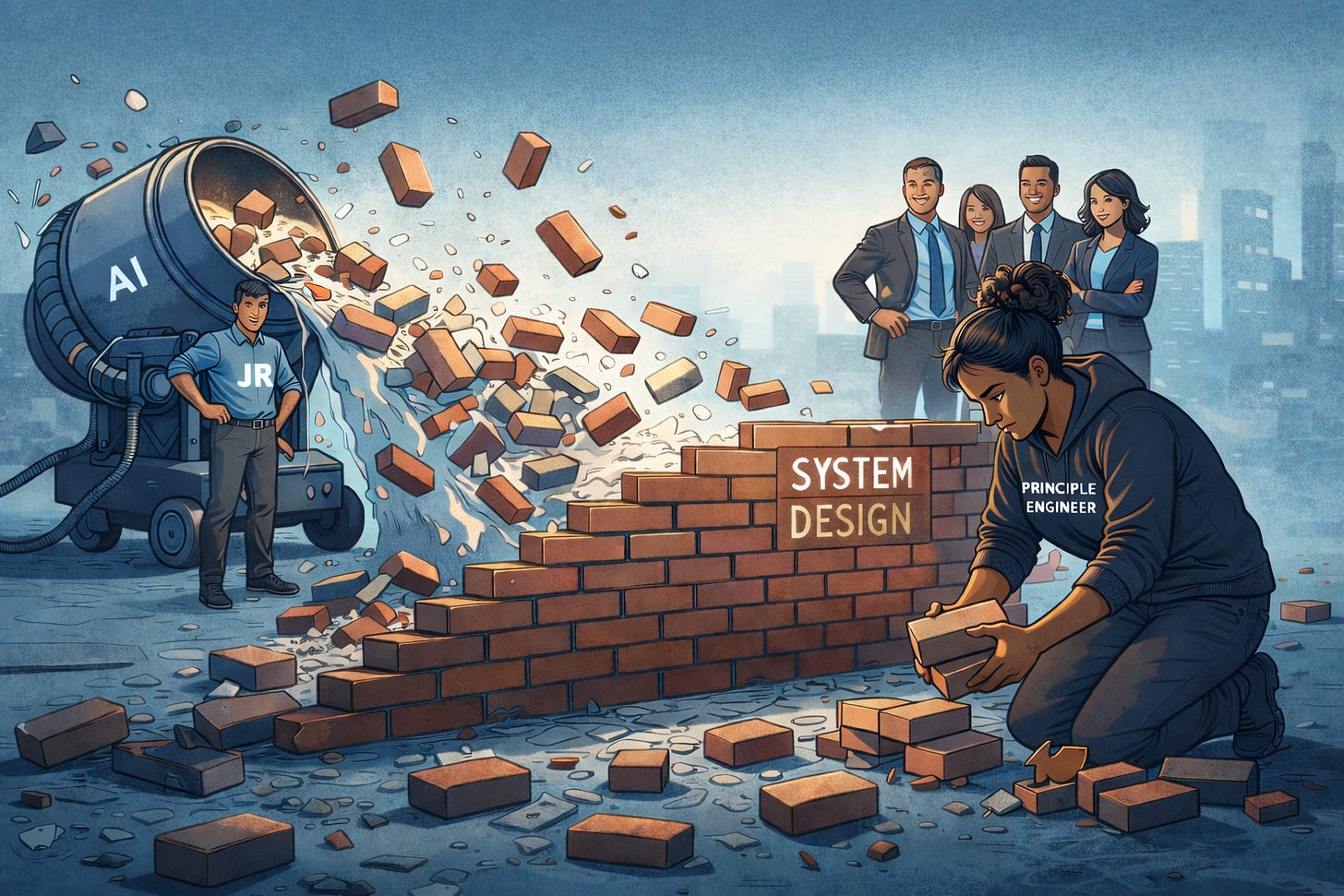

Used well, AI is a sharp junior who never gets tired. You still design the system, set standards, own what ships. The tool fills in scaffolding, tests, boilerplate.

Used badly, AI becomes the thing doing your job while you become the thing trying to understand what it did.

What Actually Breaks

A staff engineer at a fintech company shipped a payment flow with AI-suggested error handling that looked right, compiled cleanly, and passed unit tests. It also logged PII on every retry.

It took three weeks to notice. It was caught during a compliance audit, not in code review.

The engineer understood the happy path but not the failure modes, and trusted that if it compiled and the tests passed, it was fine.

The senior who caught it during the audit spent two days tracing through logs to establish the scope, then another week on remediation. No one wrote a postmortem because “it was just a bug.”

That’s what happens when AI is treated as the thing doing your job instead of the thing carrying bricks while you design the wall.
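The missing check in that story was a test of the failure path, not more happy-path coverage. Below is a minimal sketch in Python. It assumes a service that logs through the standard `logging` module. `charge_with_retry`, `send`, `redact`, and the field names in `SENSITIVE_FIELDS` are hypothetical and are not the code from this incident. The sketch shows the leaking line, a redacted version, and a helper that forces retries so a test can scan what was actually logged.

```python
import logging

logger = logging.getLogger("payments")

# Hypothetical field names; use whatever your payment payload actually carries.
SENSITIVE_FIELDS = {"card_number", "cvv", "email", "full_name"}


def redact(payload: dict) -> dict:
    """Return a copy of the payload with sensitive values masked."""
    return {k: "[REDACTED]" if k in SENSITIVE_FIELDS else v for k, v in payload.items()}


def charge_with_retry(payload: dict, send, max_attempts: int = 3):
    """Call send(payload), retrying on ConnectionError up to max_attempts times."""
    for attempt in range(1, max_attempts + 1):
        try:
            return send(payload)
        except ConnectionError as exc:
            # The plausible-looking version logs the raw payload here:
            #   logger.warning("retry %d for %s: %s", attempt, payload, exc)
            # It compiles, passes happy-path tests, and leaks PII on every retry.
            logger.warning("retry %d for %s: %s", attempt, redact(payload), exc)
            if attempt == max_attempts:
                raise


class _ListHandler(logging.Handler):
    """Collects formatted log messages so a test can inspect them."""

    def __init__(self):
        super().__init__()
        self.messages = []

    def emit(self, record):
        self.messages.append(record.getMessage())


def retry_log_messages(payload: dict) -> list:
    """Failure-path test helper: force two retries and return what was logged."""
    handler = _ListHandler()
    logger.addHandler(handler)
    calls = {"n": 0}

    def flaky_send(p):
        calls["n"] += 1
        if calls["n"] < 3:
            raise ConnectionError("gateway timeout")
        return "ok"

    try:
        charge_with_retry(payload, flaky_send)
    finally:
        logger.removeHandler(handler)
    return handler.messages
```

A denylist like `SENSITIVE_FIELDS` is the simplest option to show. An allowlist of fields that are known to be safe to log fails closed when someone adds a new PII field, which is usually the safer default for payment code.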

Strategic Fixes

If your best engineers are telling you AI is draining the craft, treat it like a production incident. Something important is on fire. It just doesn’t show up in the dashboard yet.

This isn’t fixed with Slack reminders about “using AI responsibly.” It requires resource allocation, clear boundaries, measurement that shows drain, and keeping the talent pipeline alive.

1. Map Where AI Belongs and Enforce It

Write a one-page document that sorts work into three zones.

Green work:

Test scaffolding

Migrations and transformations

Boilerplate APIs and DTOs

Internal tooling with low blast radius

Yellow work:

Feature scaffolds that need human design

Code touching non-critical customer paths

Docs, runbooks, examples

Requirement: AI declaration in the PR

Red work:

Safety-critical flows

Incident playbooks

Core domain logic where small mistakes have huge cost

Money movement or security boundaries

Requirement: human rewrite or pair review

Rule: if you cannot explain the AI-generated code in the PR description, the PR closes. Not “needs work.” Closes. Reopen when you understand what you are shipping.

Treat this like a security policy, not a suggestion.
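Treating the map like a security policy suggests checking it mechanically. Below is a minimal sketch in Python. It assumes the map is expressed as file-path patterns and that the PR description carries an AI declaration. The paths, the `PullRequest` fields, and `check` are all hypothetical and are not any CI system's API. The rules mirror the map:

- Yellow and red work must declare whether AI was used.
- Declared AI code must come with an explanation.
- AI code touching red work needs a human rewrite or pair review. This sketch interprets the red requirement as applying to AI-assisted changes.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase
from typing import Optional

# Hypothetical path patterns; map these to your own repository layout.
# First match wins, so red patterns are listed before green ones.
ZONE_RULES = [
    ("payments/*", "red"),
    ("auth/*", "red"),
    ("runbooks/incident/*", "red"),
    ("migrations/*", "green"),
    ("tests/*", "green"),
    ("api/dto/*", "green"),
    ("tools/internal/*", "green"),
]
DEFAULT_ZONE = "yellow"  # unmapped code gets the middle tier
ZONE_RANK = {"green": 0, "yellow": 1, "red": 2}


@dataclass
class PullRequest:
    files: list[str]
    ai_used: Optional[bool] = None  # None means the author did not declare either way
    ai_explanation: str = ""        # author's own account of what the AI code does
    human_rewrite: bool = False
    pair_reviewed: bool = False


def zone_for(path: str) -> str:
    for pattern, zone in ZONE_RULES:
        if fnmatchcase(path, pattern):
            return zone
    return DEFAULT_ZONE


def pr_zone(pr: PullRequest) -> str:
    # A PR is as risky as the riskiest file it touches.
    return max((zone_for(f) for f in pr.files), key=lambda z: ZONE_RANK[z], default="green")


def check(pr: PullRequest) -> list[str]:
    """Return policy violations; an empty list means the PR may proceed."""
    zone = pr_zone(pr)
    problems = []
    if zone != "green" and pr.ai_used is None:
        problems.append(f"{zone}: declare whether AI was used")
    if pr.ai_used and not pr.ai_explanation.strip():
        problems.append("close: AI-generated code is not explained in the PR description")
    if zone == "red" and pr.ai_used and not (pr.human_rewrite or pr.pair_reviewed):
        problems.append("red: AI code needs a human rewrite or pair review")
    return problems
```

A script can only confirm that an explanation exists. Whether it is a real explanation is still the reviewer's call. Scoring a PR by its riskiest file keeps a single payments change from riding along inside an otherwise green PR.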

For engineers managing up: “I support AI use in the right places. I need clarity on where it’s appropriate and where it’s risky. Can we define that as a team?”

2. Protect Seniors From Being AI Janitors

The problem isn’t seniors using AI as a tool. The problem is seniors spending their day cleaning up after people who treat AI as the thing doing their job.

Resource decision: Reserve roughly 30 percent of senior time for work where they control AI use:

Architecture and design

Complex refactors

Deep system understanding

Mentoring juniors on engineering judgment

Not AI-free: the senior decides whether and how AI helps. But this time is completely free of reviewing other people’s AI slop, reverse-engineering what a prompt meant, or salvaging thoughtless AI output.

Track it:

Is design time growing or shrinking?

Is glue work moving off senior plates?

Time spent on AI rework vs net new work

If design time is flat while “AI productivity” is up, you’re not leveraging seniors. You’re replacing engineering with curation.
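The tracking questions can be turned into numbers from a weekly time log. Below is a minimal sketch. It assumes seniors log hours in three hypothetical buckets and that the dashboard exposes some "AI productivity" count, here called `ai_prs_merged`. The bucket names, the helper names, and the 0.25 hours-per-week tolerance for "flat" are illustrative assumptions. `curation_warning` encodes the test in the paragraph above: output trending up while design time is flat or shrinking. `protected_share` compares against the roughly 30 percent target.

```python
from dataclasses import dataclass


@dataclass
class WeekLog:
    design_hours: float     # protected work: architecture, complex refactors, mentoring
    ai_rework_hours: float  # reviewing or repairing other people's AI output
    net_new_hours: float    # the senior's own new work
    ai_prs_merged: int      # the "AI productivity" number on the dashboard


def slope(values: list[float]) -> float:
    """Least-squares slope of values against week index 0..n-1."""
    n = len(values)
    if n < 2:
        return 0.0
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    num = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(values))
    den = sum((x - mean_x) ** 2 for x in range(n))
    return num / den


def protected_share(weeks: list[WeekLog]) -> float:
    """Fraction of logged hours spent on protected senior work (target: roughly 0.30)."""
    protected = sum(w.design_hours for w in weeks)
    total = sum(w.design_hours + w.ai_rework_hours + w.net_new_hours for w in weeks)
    return protected / total if total else 0.0


def rework_ratio(weeks: list[WeekLog]) -> float:
    """Hours of AI rework per hour of net new work."""
    new = sum(w.net_new_hours for w in weeks)
    return sum(w.ai_rework_hours for w in weeks) / new if new else float("inf")


def curation_warning(weeks: list[WeekLog], flat_tolerance: float = 0.25) -> bool:
    """True when AI output trends up while design time is flat or shrinking.

    flat_tolerance (hours per week of growth still counted as "flat") is an
    assumed threshold; tune it for your team.
    """
    output_trend = slope([w.ai_prs_merged for w in weeks])
    design_trend = slope([w.design_hours for w in weeks])
    return output_trend > 0 and design_trend <= flat_tolerance
```

A least-squares slope is used instead of comparing the first and last week so that one unusual week does not flip the signal.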

For engineers managing up: “I’m spending more time reviewing AI output than designing systems. That’s backwards. I need X hours per week for architecture work. Here’s what ships better when I have it: [examples]. Here’s what breaks when I don’t: [incidents].”

3. Keep Hiring Juniors or Schedule Failure

When someone says “AI replaces juniors,” the answer is: No. AI is why we need more juniors, not fewer.

Reasoning:

You need people who can critique AI output

That skill develops by writing code from scratch first

If everyone on your team learned engineering with AI only, you have no one who can tell when AI is wrong

Action:

Junior headcount stays constant or grows

Each junior pairs with a senior, learns to prompt, then to critique AI output

Promotion criteria include “can explain why an AI suggestion is wrong”

If your plan for the next five years doesn’t include junior hiring, you don’t have a plan. You have a countdown to when your last senior leaves and no one knows how the system works.

For engineers managing up: “Who will be the senior engineers in five years? If we’re not hiring and training juniors now, we’re scheduling a talent crisis. I need people to mentor, not just AI output to review.”

4. Change What You Measure

Current metrics lie:

Story points per sprint

PR velocity

AI adoption rate

Add metrics that show drain:

Design doc quality and throughput

Review depth: comments per PR, critical issues caught

Time spent reviewing AI code vs writing from scratch

Senior retention and exit interview themes

If AI is working, you should see more design docs, deeper reviews, less time on toil, and seniors staying. If you see the opposite, AI is a drain dressed up as productivity.
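The review-depth metrics only need a few fields per merged PR. Below is a minimal sketch. It assumes your review tool can export four values for each PR: whether it was declared AI-assisted, the number of substantive comments, the number of comments marked blocking, and the review time. `ReviewRecord` and both functions are hypothetical names and are not any review tool's API.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class ReviewRecord:
    ai_assisted: bool
    review_comments: int   # substantive comments left by reviewers
    critical_issues: int   # comments a reviewer marked as blocking
    review_minutes: float


def review_depth(records: list[ReviewRecord]) -> dict:
    """Per-PR review depth, split into AI-assisted and human-written PRs."""
    summary = {}
    for label, flag in (("ai", True), ("human", False)):
        group = [r for r in records if r.ai_assisted == flag]
        if not group:
            continue
        summary[label] = {
            "prs": len(group),
            "comments_per_pr": mean(r.review_comments for r in group),
            "critical_per_pr": mean(r.critical_issues for r in group),
            "review_minutes_per_pr": mean(r.review_minutes for r in group),
        }
    return summary


def review_cost_ratio(summary: dict) -> float:
    """How many times longer an AI-assisted PR takes to review than a human-written one."""
    return summary["ai"]["review_minutes_per_pr"] / summary["human"]["review_minutes_per_pr"]
```

Comparing the two groups is the point. A rising cost ratio with flat critical findings per PR may mean review time is going into reading the code rather than catching problems, which is worth a closer look.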

For engineers managing up: “I’m hitting velocity targets but quality is declining. Can we add review depth and design time to what we measure? Here’s my last two weeks: X hours reviewing AI code, Y hours on design.”

5. Treat AI Incidents as a Class

When AI contributes to a production issue, it’s not “just a bug.”

Tag it ai_contributed in your incident system.

The postmortem adds three questions:

Where did someone rely on AI past the point of understanding?

What human check should have caught this?

What guardrail or checklist makes this class of error obvious?

Then update your AI map and PR contract from incidents, not opinion pieces.

Escalation:

First AI-caused P0: team postmortem

Second: present to director

Third: executive brief on AI governance

Make AI cost visible.
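The tag, the three postmortem questions, and the escalation ladder are simple enough to encode next to your incident tooling. Below is a minimal sketch. It assumes incidents carry a severity and a set of tags. `Incident`, `postmortem_questions`, and `escalation_step` are hypothetical and are not an incident platform's API. The ladder above does not specify a counting window (per quarter, per year), so the caller chooses it by deciding which incidents to pass in.

```python
from dataclasses import dataclass, field
from typing import Optional

AI_TAG = "ai_contributed"

# The three questions added to postmortems for AI-contributed incidents.
AI_POSTMORTEM_QUESTIONS = (
    "Where did someone rely on AI past the point of understanding?",
    "What human check should have caught this?",
    "What guardrail or checklist makes this class of error obvious?",
)

# Escalation ladder keyed by how many AI-contributed P0s the team has had.
ESCALATION = {
    1: "team postmortem",
    2: "present to director",
    3: "executive brief on AI governance",
}


@dataclass
class Incident:
    incident_id: str
    severity: str                         # e.g. "P0", "P1"
    tags: set[str] = field(default_factory=set)


def postmortem_questions(incident: Incident) -> tuple[str, ...]:
    """Extra postmortem questions; empty unless the incident carries the AI tag."""
    return AI_POSTMORTEM_QUESTIONS if AI_TAG in incident.tags else ()


def escalation_step(incidents: list[Incident]) -> Optional[str]:
    """Escalation for the latest AI-contributed P0 among the incidents passed in.

    Assumption: anything past the third stays at the executive brief.
    """
    count = sum(1 for i in incidents if i.severity == "P0" and AI_TAG in i.tags)
    if count == 0:
        return None
    return ESCALATION[min(count, 3)]
```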

For engineers managing up: “This is the third time AI contributed to a production issue. Can we treat these as a class of incident and track patterns?”

6. Handle Executive Pressure With Data

When the directive is “increase AI usage,” have a counter-narrative ready.

Script: “We’re at X percent AI use in safe zones. Moving to Y percent requires using it in critical paths. Here’s the incident risk and remediation cost. I recommend we optimize current usage before expanding the footprint.”

Bring data:

AI PRs that needed extensive rework

Time to review AI code vs human code

Near misses caught in review

Specific incident where AI looked right but was wrong

Make “AI usage” a nuanced metric: where used, effectiveness, review burden, incident contribution. Not a vanity number for the board deck.
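Making "AI usage" nuanced means reporting it per zone rather than as one number. Below is a minimal sketch. It assumes each merged PR is labeled with five things: its zone from the AI map, whether it was AI-assisted, whether reviewers flagged it for extensive rework, its review time, and whether it was later linked to an ai_contributed incident. Every field and function name here is hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class PRStat:
    zone: str               # "green", "yellow" or "red", from the AI map
    ai_assisted: bool
    needed_rework: bool     # reviewer flagged the PR for extensive rework
    review_minutes: float
    incident_linked: bool   # later tied to an ai_contributed incident


def usage_report(prs: list[PRStat]) -> dict:
    """Per-zone AI usage: where used, effectiveness, review burden, incident contribution."""
    by_zone = defaultdict(list)
    for pr in prs:
        by_zone[pr.zone].append(pr)

    report = {}
    for zone, group in by_zone.items():
        ai = [p for p in group if p.ai_assisted]
        report[zone] = {
            # Where used: share of this zone's PRs that were AI-assisted.
            "ai_share": len(ai) / len(group),
            # Effectiveness (inverted): share of AI PRs that needed extensive rework.
            "rework_rate": sum(p.needed_rework for p in ai) / len(ai) if ai else 0.0,
            # Review burden: total reviewer minutes spent on AI PRs.
            "ai_review_minutes": sum(p.review_minutes for p in ai),
            # Incident contribution: AI PRs later linked to an incident.
            "ai_incidents": sum(p.incident_linked for p in ai),
        }
    return report
```

In the script above, the green-zone `ai_share` supplies the "X percent AI use in safe zones." The red-zone row is where the incident risk and remediation cost of expanding into critical paths would show up.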

For engineers managing up, if your manager won’t engage:

Document the specific ask and the refusal

Skip level: “I brought this to [manager], no movement. This affects our ability to build reliable systems.”

If still blocked: retention conversation. “I need to work somewhere that values engineering judgment, not just AI throughput.”

Bottom Line

The strategic question isn’t “how much AI.” It’s “how do we use AI without losing the people who can build without it.”

Answer that with:

Resource allocation: protect senior design time

Measurement: track drain, not just output

Career incentives: reward judgment, not prompt fluency

Talent pipeline: keep hiring juniors

AI is leverage. It magnifies whatever it touches, including weak process, bad architecture, and absent feedback. If leadership won’t fix this, engineers need to demand it or leave for teams that will.

Artifacts are cheap; judgment is scarce.

Per ingem, Veritas