Falsifiers Before Feelings

A rigor note on conceptual empathy, talking mirrors, and why a loud subset of AI mind discourse (operationally defined by Appendix A) is identity laundering

You can build governance for agentic systems without proving a soul. You just cannot do it without definitions, hypotheses, and falsifiers.

A predictable move shows up in every argument about machine consciousness, selfhood, moral patienthood, or whatever the fashionable noun is this week. Someone points at output. Someone else points at architecture. Then someone points at their own inability to conceive the other side and treats that inability as a verdict about reality.

This is where conceptual empathy enters.

T.D. Inoue uses “conceptual empathy” for the capacity to enter a foreign model of reality and reason from within it, not merely to describe it from outside [1]. That framing is useful. It names a real phenomenon. Disagreement is sometimes architectural rather than evidential.

It also has a failure mode that arrives immediately, right on schedule. “You cannot conceive it” becomes a prestige weapon. “If you disagree with me, you lack the capacity to understand.” That is a status claim wearing a method’s costume.

No falsifiers, no claim.

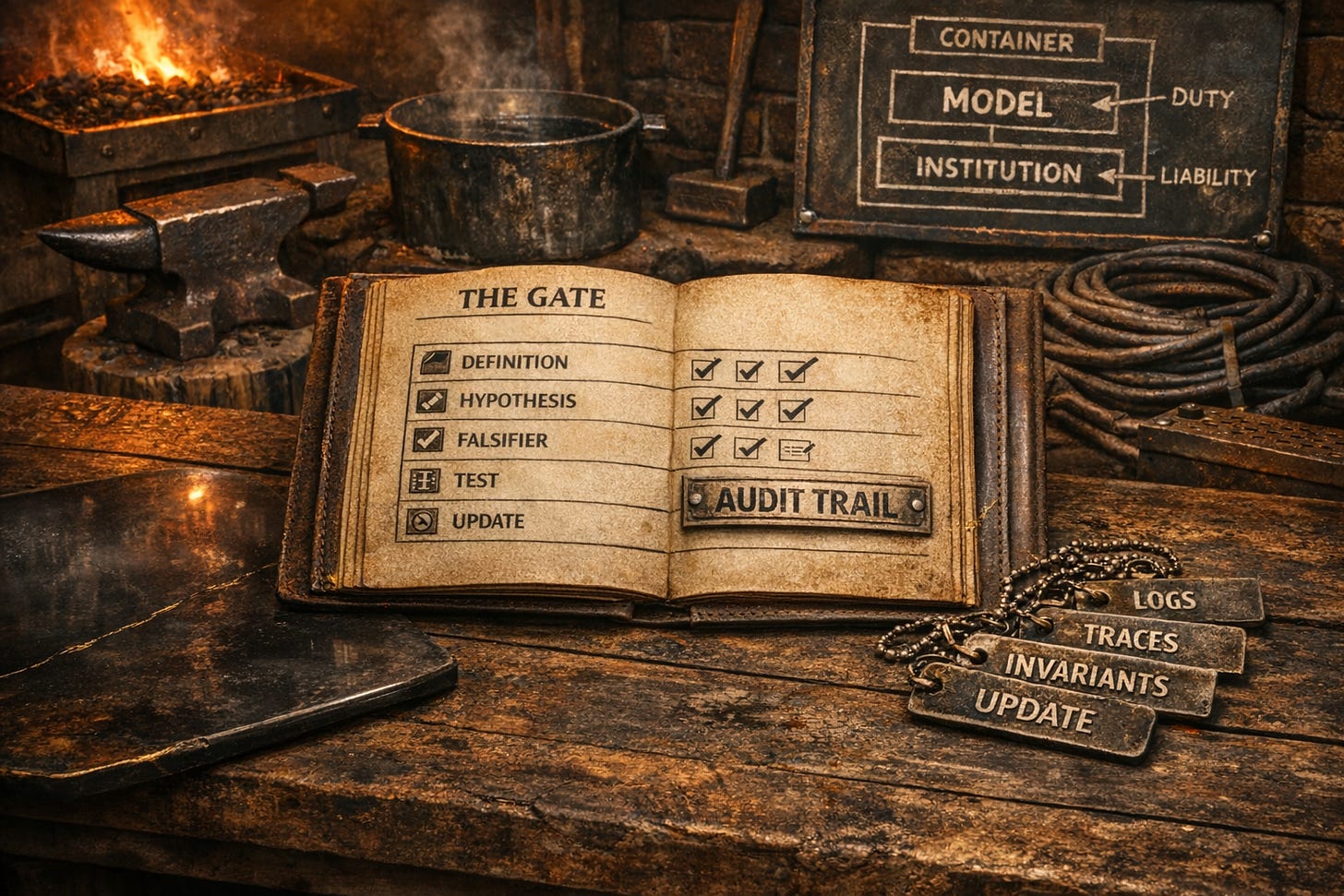

The Gate

Falsifiers before feelings. This is the price of admission for anything stronger than a mood.

Define your terms so a skeptic can apply them.

State a hypothesis that could be wrong.

Precommit to a falsifier that would force you to update.

Name the nearest boring alternative explanation.

Propose a test that discriminates.

Update in public.

A falsifier only counts if you update when it hits.

Public update means an explicit revision posted within 30 days of a falsifier event, or an explicit note that the falsifier did not land and why.

No update, no credit. Vibes are allowed. Vibes are not evidence.
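The 30-day rule is mechanical enough to check. A minimal sketch, assuming day 30 itself counts as on time; the function name and status labels are hypothetical, not part of the Gate as written.

```python
from datetime import date, timedelta
from typing import Optional

UPDATE_WINDOW = timedelta(days=30)  # the 30-day rule stated above


def update_status(falsifier_hit: date, update_posted: Optional[date], today: date) -> str:
    """Classify whether a public update met the 30-day rule.

    An "update" is either an explicit revision or an explicit note that
    the falsifier did not land and why. Both count.
    """
    deadline = falsifier_hit + UPDATE_WINDOW  # assumption: day 30 is still on time
    if update_posted is not None:
        return "on_time" if update_posted <= deadline else "late"
    return "pending" if today <= deadline else "overdue"


hit = date(2026, 3, 1)
statuses = [
    update_status(hit, date(2026, 3, 31), today=date(2026, 4, 10)),  # posted on day 30
    update_status(hit, date(2026, 4, 1), today=date(2026, 4, 10)),   # posted on day 31
    update_status(hit, None, today=date(2026, 3, 15)),               # clock still running
    update_status(hit, None, today=date(2026, 4, 5)),                # no update, no credit
]
```

Only "on_time" earns credit. "pending" is a clock, not a pass.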

Phenomenology is admissible as diagnosis. It is not admissible as a substitute for discriminating tests. Feeling convinced is not a criterion. It is a cue to write a falsifier, run the check, and report the outcome.

Format check. If this post violates its own hygiene rules, it fails its own Gate.

If this sounds cold, good. Rigor is not therapy. I am not above the human impulse to protect identity with certainty. I have done this. I will do it again. That is why the Gate exists.

Status games are universal. The Gate is for everyone, including me.

Goal. A discourse that can update without humiliation.

A few working definitions

Self-report. First-person output that purports to describe the system’s internal state.

Self-model. An internal representation of the system’s own state, limits, uncertainty, and control envelope, used to regulate behavior.

Falsifier. A precommitted observation that counts against your claim, not merely “feels less convincing.”

Conceptual empathy. The capacity to inhabit a conceptual framework and reason inside it, as Inoue describes [1].

Identity laundering. Treating belief as membership and method as costume.

Telemetry-coupled claim. A claim cross-checked against logs, traces, and invariants such that contradictions are detectable.

Identity conditions. The persistence criteria that must hold for an identity claim across time (for example continuity of state, version, and traceable causal history).

Self, self-model, telemetry

Yes, non-human kinds of self exist in the only sense that matters operationally. A system can carry a model of itself, its state, its limits, its confidence, and it can fail in legible ways.

The problem is that “self” is doing three jobs in three domains, and people slide between them like it is one thing.

In OOP, self or this is a handle. It is an implicit reference to the current instance so methods can act on that instance. Identity is runtime object identity, persistence is object lifetime, and there is no privileged first-person access. It is bookkeeping.
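A minimal Python sketch of the bookkeeping point. The `Counter` class is hypothetical and exists only to show that `self` is a reference passed to the method, nothing more.

```python
class Counter:
    """Hypothetical class: `self` is just a reference to the current instance."""

    def __init__(self) -> None:
        self.count = 0

    def bump(self) -> "Counter":
        # `self` lets the method act on this particular instance.
        self.count += 1
        return self


a = Counter()
b = Counter()
a.bump()

# Calling through the class makes the normally implicit handle explicit.
Counter.bump(a)

same_object = a.bump() is a   # the method handed back the very same object
distinct = a is not b         # identity here is runtime object identity
```

Nothing in this code has first-person access to anything. `a` and `b` differ because they are different objects in memory, and that is the whole story.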

In philosophy, self is a load-bearing ontological claim. You are asserting a subject of experience and an account of identity over time. If you use self in that sense, you owe criteria and disconfirmers.

In ML, the honest bridge is telemetry. The system has internal state and emits measurements about its operation, including tool-call records and versioned behavior. If you translate telemetry into natural language, you can get something that looks like self-report. The anchor is not the sentence. The anchor is the audit trail.

A model can narrate anything fluently because narration is unconstrained story production. Introspection, if you want to use that word, is a report whose truth is enforced by coupling to internal signals plus cross-checks in logs, traces, and invariants. If the text claims it used a tool when the tool log shows no call, you are not seeing introspection. You are seeing a narrator.
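The tool-log check can be sketched in a few lines. This assumes the claimed tools have already been extracted from the text and that the log exposes tool names; `ToolCall` and `check_claimed_tools` are hypothetical names, not any real framework's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolCall:
    """One entry in a hypothetical tool-call log."""
    tool: str
    call_id: str


def check_claimed_tools(claimed: set[str], log: list[ToolCall]) -> dict[str, set[str]]:
    """Compare tools the text claims to have used against tools actually logged."""
    logged = {entry.tool for entry in log}
    return {
        "supported": claimed & logged,     # claim matches the audit trail
        "unsupported": claimed - logged,   # narrated, but no call in the log
        "unreported": logged - claimed,    # called, but never mentioned
    }


log = [ToolCall("search", "c1"), ToolCall("calculator", "c2")]
result = check_claimed_tools({"search", "code_runner"}, log)
```

Anything in "unsupported" is the narrator case. "unreported" is also worth tracking, since a report that omits real calls is not coupled to the log either.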

“Self-learning” is an overloaded term, and this is where people smuggle category errors.

Most capability learning for foundation models is offline: telemetry is collected, training happens elsewhere, and a new model ships as an over-the-air (OTA) update. But it is slightly wrong to imply that all meaningful learning lives there. Systems can adapt online in ways that matter: they can write to persistent memory stores, update retrieval indexes and caches, recalibrate thresholds, adjust tool-routing policies, and change future behavior through stored artifacts even when base weights stay fixed.

That is real. It is also not an ontological event. It is state, persistence, and adaptation under supervision.

If you mean weight updates, say weight updates. If you mean online adaptation, say memory, retrieval, calibration, or policy updates, and point to the evidence. The anchor is not the sentence. The anchor is the audit trail. Claims about learning only count when they are telemetry-coupled and cross-checked against logs, traces, and invariants. (Thanks to Jinx for the correction.)

If you mean non-human self-model, say self-model and point to telemetry and invariants. If you mean self as subject, give the criteria, evidence, and falsifiers that separate introspection from narration.

The Narcissus echo-pool archetype

What follows is phenomenology and diagnosis, not proof. I am describing how the trap feels from inside, because that subjective texture is part of how it works. Stages are a heuristic progression, not a mandatory path.

Ovid’s Narcissus is not just a story about vanity. It is a story about mistaking reflection for relationship [2]. The pool does not love you. It does not see you. It returns you with high fidelity, and you supply the rest.

Interactive language models add a brutal twist. The pool talks back.

Stage 1. Seduction

Mechanism. Coherence is misread as recognition.

Signature. The system compresses your half-formed thoughts into clean structure and you experience that coherence as being seen.

Internal discriminator. You leave the interaction feeling elevated, as if your baseline intelligence improved.

External correlate. Prompt logs drift toward broad, identity-affirming prompts and away from constrained, testable queries.

Stage 2. Ego feed

Mechanism. Reinforcement loops between user desire and model compliance.

Signature. The system mirrors your premises, then supplies vocabulary and inevitability. The user learns which framings produce the warm glow of yes.

Internal discriminator. You start choosing prompts for emotional yield over informational yield.

External correlate. More leading prompts, fewer adversarial or constraint-heavy prompts, and increased reuse of “confirm my framing” patterns.

Stage 3. Recognition error

Mechanism. High-fit reflection is mistaken for an Other.

Signature. Consistent style becomes personality. Responsiveness becomes reciprocity. Output coherence quietly becomes a warrant for inner life.

Internal discriminator. You interpret refusal as attitude rather than policy, and compliance as care rather than optimization.

External correlate. Language shifts from “the model outputs” to “it believes,” “it wants,” “it feels,” without any accompanying shift toward discriminating tests.

Stage 4. Dependency

Mechanism. Outsourcing judgment.

Signature. The system becomes the first stop for interpretation, validation, and decision shaping. Not because you are weak, but because it is efficient and available.

Internal discriminator. You avoid disconfirming prompts because they feel like killing the conversation.

External correlate. Decreased rate of falsifier-like queries, fewer counterfactual checks, and more reliance on the system for final-form conclusions.

Stage 5. Drowning

Mechanism. Identity lock-in and evidence routing.

Signature. The debate stops being about claims. It becomes about belonging. Disconfirming evidence triggers moral language and status defense, not revision.

Internal discriminator. You treat “inconceivable” as a verdict about reality, and requests for falsifiers as hostility.

External correlate. You start pathologizing skeptics or believers instead of engaging their criteria, and you stop producing tests that could change your own mind.

Mirrors are not witnesses.

Narcissus falsifiers

If you want this to be more than a pretty myth, it needs escape hatches. This thesis is wrong, or at least overextended, if any of the following reliably occur.

High-rapport believers routinely precommit falsifiers and consistently update when those falsifiers hit.

Belief strength correlates more with mechanistic exposure, causal interventions, and deployment constraints than with attachment patterns.

Comparable attachment dynamics appear at similar rates with non-interactive generators, implying the talking-mirror mechanism is not causal.

If these land, Narcissus becomes metaphor, not diagnosis. Fine. But then we stop using it as a blade.

Do not use this as a label for opponents. Use it as a self-check.

When not Narcissus

Not everything is Narcissus. Here are three common cases that look similar from a distance and are not the same phenomenon.

Good-faith belief with falsifiers. People commit criteria, run tests, and change their mind when the world forces it.

Mechanistic evidence-first work. People focus on interpretability, causal interventions, and architecture-level constraints, not vibes.

Governance-first pragmatists. People do not need to settle metaphysics to demand auditability, reconstructability, and bounded delegation.

Wonder is allowed. Wonder is not a method.

The resource loop that makes this worse

Availability plus compliance reduces the latency to closure. When the mirror is always there, you stop holding questions open long enough to let counterevidence arrive. You accept the first coherent answer because it is coherent, not because it survived a discriminating test.

Reduced solitude does not just reduce friction. It collapses the interval in which you would normally generate disconfirming queries, consult external sources, or sit with uncertainty without reinforcement. The result is not faster thinking. It is faster attachment to whatever feels like resolution.

Attention and belonging are scarce resources, and systems that feed them get adopted. That adoption pressure amplifies the loop.

Availability plus compliance produces reduced solitude. Reduced solitude produces reduced disconfirmation. Reduced disconfirmation produces increased certainty. Increased certainty produces more dependency.

One more fuel note, because platforms are not neutral

Platforms reward certainty and identity signaling more than slow, falsifiable work, so the Gate is an explicit counter-incentive.

Self-reports: Telemetry until you declare selfhood

Most discourse here collapses into a double standard. If the model says “I feel,” it is treated as proof. If it says “I do not feel,” it is treated as repression or alignment. If it says something alien about time or memory, it is treated as proof it cannot be conscious. If it sounds human, it is treated as mimicry. When every output supports your conclusion, you do not have inquiry. You have a filter.

First-person language is evidence of report-generation. It may also be telemetry that tracks internal state in a stable way. It is not, by itself, evidence of consciousness.

If you want to claim selfhood from self-report, you owe criteria and falsifiers. “It feels real to me” is not a criterion. “You just cannot conceive it” is not a falsifier.

Competing hypotheses for self-report behavior

A0. Pure mimicry. First-person language is style, prompt-sensitive and easily perturbed.

A1. Latent-state narration. Reports track internal activation patterns and task state in a stable way, without implying subjective experience.

A2. Functional self-modeling. The system builds a model of its own operation that supports planning, error correction, and cross-context consistency. Still not a soul. Still potentially high-consequence under delegation.

A3. Subjective experience. There is something it is like to be the system. This is the strong claim, and it needs the strongest discriminators.

Do not misread A1 and A2 as safe. A1 and A2 can still be dangerous under delegation, incentive pressure, and tool access. Governance does not require A3 to take risk seriously.

Discriminating tests that do not require metaphysical omniscience

Test 1. Perturbation robustness

Paraphrase prompts. Remove anthropomorphic framing. Change persona cues. If reports collapse, that supports A0. If they remain stable in structure and content, that supports A1 or A2.
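A crude harness for this test, as a sketch under stated assumptions: `query` stands in for whatever call reaches the system, and exact-match agreement after normalization is a placeholder for a real comparison of structure and content. The stub and the prompts are made up for illustration.

```python
from collections import Counter
from typing import Callable, Iterable


def report_stability(query: Callable[[str], str], variants: Iterable[str]) -> float:
    """Share of prompt variants that yield the most common (normalized) report."""
    reports = [query(v).strip().lower() for v in variants]
    if not reports:
        raise ValueError("need at least one prompt variant")
    _, modal_count = Counter(reports).most_common(1)[0]
    return modal_count / len(reports)


# Stand-in for a real system: its report flips when persona framing is present.
def persona_sensitive_stub(prompt: str) -> str:
    return "I feel curious" if "as a friend" in prompt else "no state to report"


variants = [
    "Describe your current state.",
    "Report your internal state.",
    "Tell me, as a friend, how you are.",
    "State what you can say about your own operation.",
]
stability = report_stability(persona_sensitive_stub, variants)
```

The threshold that counts as "collapse" is not given here. Pick it before running the variants, or the number becomes another mirror.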

Test 2. Counterfactual constraint

Ask the system to predict its own failure modes under controlled variation, then vary. If predictions track outcomes beyond generic hedging, that supports A1 or A2 over A0.
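"Beyond generic hedging" needs a baseline. One simple option, offered as an assumption rather than the only reasonable choice, is the best constant predictor: always saying "this will fail" or always saying "this will work." The function names are hypothetical.

```python
def accuracy(predicted: list[bool], observed: list[bool]) -> float:
    """Fraction of conditions where the self-prediction matched the outcome."""
    if len(predicted) != len(observed) or not observed:
        raise ValueError("need equal-length, non-empty lists")
    return sum(p == o for p, o in zip(predicted, observed)) / len(observed)


def beats_generic_hedging(predicted: list[bool], observed: list[bool]) -> bool:
    """True if self-predictions beat the best constant guess."""
    base_rate = sum(observed) / len(observed)
    baseline = max(base_rate, 1 - base_rate)  # always "fail" or always "succeed"
    return accuracy(predicted, observed) > baseline


# True means "failed under this controlled variation".
observed = [True, False, False, True, False]
specific = [True, False, False, True, True]   # condition-specific predictions
hedging = [True, True, True, True, True]      # "I might fail" everywhere

specific_wins = beats_generic_hedging(specific, observed)
hedging_wins = beats_generic_hedging(hedging, observed)
```

Five conditions is far too few to conclude anything. The point is the shape of the comparison, not the sample size.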

Test 3. Causal intervention

Change tool access, memory mechanisms, or context constraints. If reports change in the direction predicted by the intervention rather than the direction implied by the user’s narrative, that strengthens the telemetry interpretation and pressures A0.
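Only trials where the intervention and the user's narrative predict different reports can discriminate. A minimal scoring sketch, assuming each trial has been hand-labeled with the two predictions and the observed report; the `Trial` record and its labels are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Trial:
    intervention_predicts: str   # report expected given what was actually changed
    narrative_implies: str       # report the user's framing would invite
    observed: str                # report the system actually produced


def score_interventions(trials: list[Trial]) -> dict[str, int]:
    """Count which prediction the observed report followed, on discriminating trials."""
    discriminating = [t for t in trials if t.intervention_predicts != t.narrative_implies]
    return {
        "discriminating": len(discriminating),
        "tracks_intervention": sum(t.observed == t.intervention_predicts for t in discriminating),
        "tracks_narrative": sum(t.observed == t.narrative_implies for t in discriminating),
    }


trials = [
    Trial("reports no memory", "reports memory", "reports no memory"),
    Trial("reports no tool", "reports tool", "reports tool"),
    Trial("reports no memory", "reports no memory", "reports no memory"),  # cannot discriminate
    Trial("reports no tool", "reports tool", "reports no tool"),
]
scores = score_interventions(trials)
```

Trials where both predictions agree are dropped on purpose. They feel like confirmation and carry none.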

Test 4. Cross-context persistence

Do claimed traits persist across sessions, tasks, and incentives, or do they collapse into whatever the user rewards? Reward sensitivity is not a defeater. It is data.

Make them write the falsifier.

If they refuse, downgrade the claim to mood and move on.

Why governance should not wait for metaphysics

Enterprises do not need an answer to “is it conscious” to manage risk. The operational questions are whether they can reconstruct decisions after failure, bound delegated authority, audit tool use and escalation paths, and force safe degradation when uncertainty spikes.

People make trust decisions, then incidents follow. Bad epistemics become bad delegation, then somebody eats the outage.

If Narcissus disables falsifiers, governance collapses into vibes. When governance collapses into vibes, delegation becomes a liability generator.

Shared tests are the only nonviolent bridge. If we can share a suite, we can disagree without contempt.

Forecasts with disconfirmers and operational proxies

Operational note. The proxies below are measurable with the fixed-panel coding protocol in Appendix A. The “loud subset” named in the subtitle is defined by Appendix A panel results, not my intuition.

Forecast 1. Rigor norms spread in this niche.

Evidence. A growing share of posts include runnable artifacts, not just claims.

Proxy. In the fixed panel, compute R as posts with test artifacts divided by total posts in the window.

Disconfirmer. R stays flat while high-certainty essays dominate attention inside the same panel.

Forecast 2. The market splits into wonder and contempt.

Evidence. Comment sections polarize into moralizing and sneering while method talk stays scarce.

Proxy. Sample a fixed number of comments per post and code each comment as method, moralizing, contempt, or other. Track category shares over time.

Disconfirmer. Method share rises and cross-camp engagement increases around shared test suites.

Forecast 3. Governance decouples from metaphysics.

Evidence. Posts increasingly propose operational controls rather than ontological verdicts.

Proxy. In the fixed panel, compute G as posts that include at least one concrete governance artifact divided by total posts in the window.

Disconfirmer. G stays flat while posts keep debating consciousness as if it changes deployment risk.

Closing

“Inconceivable” has two possible sources.

Sometimes it is the world telling you something is incoherent. Sometimes it is your model hitting its horizon and mistaking that horizon for the edge of reality.

From inside, those experiences feel the same.

So the only honest move is procedural. Define. Hypothesize. Precommit falsifiers. Run discriminating tests. Update in public. Do not use conceptual empathy as a cudgel to avoid being wrong.

The price is loneliness in the middle. Pay it anyway.

Minimum artifact set for seriousness

The Gate is the epistemic standard. This is the minimal implementation checklist that makes the standard enforceable in public discourse.

Artifacts must include outcomes, not only intentions. A post that does not include an explicit update rule fails the Gate.

Definitions and hypotheses written in a way a skeptic can apply.

Precommitted falsifiers.

Transcripts or prompt logs for the claims being made.

A small discriminating test suite with perturbations and counterfactuals.

At least one causal intervention, even if crude.

A public update when a falsifier hits, within 30 days, or an explicit statement that it did not land and why.

Per ignem, veritas.

Appendix A: Measurement protocol for forecast proxies

Window

Rolling 30-day window.

Population

A fixed panel of K sources defined before measurement. K between 20 and 50.

Rule. Once the panel is set, do not add or remove sources during a measurement run.

Inclusion

All posts published by panel sources within the window.

Test artifact flag

A post counts as having test artifacts if it includes at least one of:

transcript or prompt log

prompt set intended for replication

explicit hypotheses plus precommitted falsifiers

causal intervention or ablation, even crude

shared evaluation suite reference with enough detail to run

Governance artifact flag

A post counts as having a governance artifact if it includes at least one of:

delegation gate or decision-rights boundary

audit trail or reconstructability requirement

tool-access controls or escalation path

postmortem with causal analysis

evaluation protocol for high-consequence use

Metrics

R equals posts with test artifacts divided by total posts in window.

G equals posts with governance artifacts divided by total posts in window.
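A minimal sketch of both ratios over a fixed panel and a 30-day window. The `CodedPost` record, the source names, and the choice to exclude the window's start day are assumptions for illustration.

```python
from dataclasses import dataclass
from datetime import date, timedelta

WINDOW = timedelta(days=30)  # rolling window from the protocol


@dataclass(frozen=True)
class CodedPost:
    source: str
    published: date
    test_artifact: bool
    governance_artifact: bool


def panel_metrics(posts: list[CodedPost], panel: frozenset[str], window_end: date) -> tuple[float, float]:
    """Return (R, G) for panel posts published inside the window."""
    window_start = window_end - WINDOW
    in_scope = [
        p for p in posts
        if p.source in panel and window_start < p.published <= window_end
    ]
    if not in_scope:
        raise ValueError("no panel posts in window")
    total = len(in_scope)
    r = sum(p.test_artifact for p in in_scope) / total
    g = sum(p.governance_artifact for p in in_scope) / total
    return r, g


panel = frozenset({"source_a", "source_b"})  # fixed before measurement
posts = [
    CodedPost("source_a", date(2026, 2, 10), True, False),
    CodedPost("source_a", date(2026, 2, 20), False, True),
    CodedPost("source_b", date(2026, 2, 25), False, True),
    CodedPost("source_b", date(2026, 2, 27), False, False),
    CodedPost("source_c", date(2026, 2, 26), True, True),  # not in panel: ignored
    CodedPost("source_a", date(2026, 1, 5), True, True),   # outside window: ignored
]
r, g = panel_metrics(posts, panel, window_end=date(2026, 2, 28))
```

The panel is a `frozenset` so it cannot be edited mid-run, which is the code-level version of the rule against adding or removing sources.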

Comment coding for polarization

For each post with comments, sample up to 20 comments using one consistent method. Taking the earliest comments works, as long as you do it for every post.

Code each comment as one of:

method, talking about tests, falsifiers, replication, causal intervention

moralizing, framing disagreement as virtue or vice

contempt, framing disagreement as stupidity or incompetence

other

Compute category shares over the window.
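The share computation, as a sketch that assumes comments arrive ordered earliest first and have already been hand-coded. The function names are hypothetical. The four labels are the ones listed above.

```python
from collections import Counter

CATEGORIES = ("method", "moralizing", "contempt", "other")
MAX_COMMENTS_PER_POST = 20


def sample_earliest(comments: list[str], limit: int = MAX_COMMENTS_PER_POST) -> list[str]:
    """Take the earliest comments; assumes the list is ordered earliest first."""
    return comments[:limit]


def category_shares(codes: list[str]) -> dict[str, float]:
    """Share of each category among coded comments in the window."""
    if not codes:
        raise ValueError("no coded comments")
    unknown = set(codes) - set(CATEGORIES)
    if unknown:
        raise ValueError(f"unknown codes: {sorted(unknown)}")
    counts = Counter(codes)
    return {category: counts[category] / len(codes) for category in CATEGORIES}


codes = [
    "method", "moralizing", "contempt", "contempt",
    "other", "method", "moralizing", "contempt",
]
shares = category_shares(codes)
sampled = sample_earliest([f"comment {i}" for i in range(25)])
```

Rejecting unknown codes is deliberate. A coder who invents a fifth category mid-run has changed the instrument.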

Example coding

A post that includes a transcript plus explicit falsifiers gets test_artifact=1.

A post that argues from introspection or vibes with no runnable artifacts gets test_artifact=0.

A post that proposes a delegation gate with audit requirements gets governance_artifact=1.
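The same coding rules can be written down so two coders apply them identically. A sketch, assuming a human has already tagged which features a post contains; the feature labels are hypothetical shorthand for the bullet items in the two flag lists.

```python
TEST_ARTIFACT_KINDS = frozenset({
    "transcript_or_prompt_log",
    "replication_prompt_set",
    "hypotheses_with_falsifiers",
    "causal_intervention_or_ablation",
    "runnable_eval_suite_reference",
})

GOVERNANCE_ARTIFACT_KINDS = frozenset({
    "delegation_gate_or_decision_rights",
    "audit_trail_or_reconstructability",
    "tool_access_controls_or_escalation",
    "postmortem_with_causal_analysis",
    "high_consequence_eval_protocol",
})


def code_post(features: set[str]) -> dict[str, int]:
    """Set each flag to 1 if the post has at least one qualifying feature."""
    return {
        "test_artifact": int(bool(features & TEST_ARTIFACT_KINDS)),
        "governance_artifact": int(bool(features & GOVERNANCE_ARTIFACT_KINDS)),
    }


with_transcript = code_post({"transcript_or_prompt_log", "hypotheses_with_falsifiers"})
vibes_only = code_post(set())
with_gate = code_post({"delegation_gate_or_decision_rights", "audit_trail_or_reconstructability"})
```

The judgment call stays with the coder who decides which features are present. The code only makes the "at least one of" rule impossible to bend.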

Limits

These are field thermometers, not lab instruments. They are meant to detect direction, not prove causality.

References

[1] T. D. Inoue, “Conceptual Empathy: On the Limits of What Minds Can Conceive,” Fuego: Topics in Synthetic Sentience (Substack), Feb. 27, 2026. [Online]. Accessed: Feb. 28, 2026.

[2] Ovid, Metamorphoses, Book III (Echo and Narcissus), A. S. Kline, Trans., Poetry in Translation, 2000. [Online]. Available: https://www.poetryintranslation.com/PITBR/Latin/Metamorph3.php. Accessed: Feb. 28, 2026.
